How FinTech Platforms Are Rethinking AI Support Architecture

Explore how platforms are architecting governed AI agents to safely handle sensitive financial data and regulatory risks.

Financial data is among the most sensitive information people share with any platform. Mistakes carry regulatory consequences. A wrong answer about a fraud hold or a mishandled account dispute doesn't just frustrate a customer; it creates legal exposure, erodes compliance standing, and generates the kind of press that takes years to undo.

For most of the past decade, FinTech support teams handled this risk the same way: resolve the easy stuff with automation, put humans on everything sensitive, and accept the cost structure that comes with it.

That model is under pressure. Not because the stakes have changed, but because AI agents have matured to the point where "putting a human on it" is no longer the only way to handle complex, high-sensitivity interactions safely. The question has shifted from whether to automate to how to architect the automation so it can actually be trusted.

The FinTech Data Problem Is Structural

Every industry has sensitive data. FinTech's version is particularly acute because of how the data is structured, and how many different parties have legitimate but distinct claims on it.

Take a platform that serves both business account holders and their employees. The business owner has full visibility. An employee cardholder sees only their own card activity. Both are authenticated users of the same platform. Both might ask similar questions — why was this transaction declined? — but the data each is authorized to see, and the actions each is authorized to take, are fundamentally different.

Add a third layer: the platform itself, with internal operations teams, compliance functions, and fraud analysts who each need access to account data under entirely different rules.

This isn't a hypothetical edge case. It's the standard operating model for business banking platforms, lending platforms, expense management tools, and payment infrastructure providers. The data environment is shared. The access rules are not.

When AI agents enter this environment with broad access and without hard participant-level eligibility rules, the potential for cross-contamination is significant. Not because the agent is malicious. Because it's doing exactly what it was designed to do: find the most relevant information and use it to answer the question.

The problem is that relevant and permitted aren't the same thing.

What Happens When FinTech AI Gets It Wrong

The failure modes in FinTech are higher-stakes versions of the same patterns that appear across any multi-sided platform.

Regulatory exposure. Financial data access is governed by regulations that require data minimization, purpose limitation, consent, and auditability. An agent surfacing account data to an unauthorized user isn't just a support incident; it's a potential compliance event. "The model thought it was relevant" is not an answer that satisfies a regulator.

Account holder trust. Financial services run on trust in a way most industries don't. The moment a user starts asking "What else does this platform know about me, and who else can see it?", you've created a problem no CSAT score captures.

Internal access control violations. Fraud analysts operate under different access rules than customer support. Compliance teams have different scopes than account managers. An AI agent operating across all of these functions without explicit role-based eligibility controls becomes a vector for internal access violations.

High-stakes decision errors. The interactions where AI agents are most useful — fraud disputes, payment failures, account restrictions — are also the interactions where an error has the most consequence. A customer who acts on wrong information about a fraud dispute resolution process creates downstream liability the platform is then responsible for.

The Rethink That's Happening

The FinTech platforms leading the architectural shift aren't abandoning automation. They're separating two things that have historically been bundled together: the AI agent's reasoning capability and its data access.

The insight is straightforward. An AI agent's ability to understand context, handle nuance, and navigate complex interactions doesn't depend on having access to everything. It depends on having access to the right things for the context it's operating in.

This leads to an architectural principle that looks obvious in retrospect: define what's eligible before the agent retrieves anything.

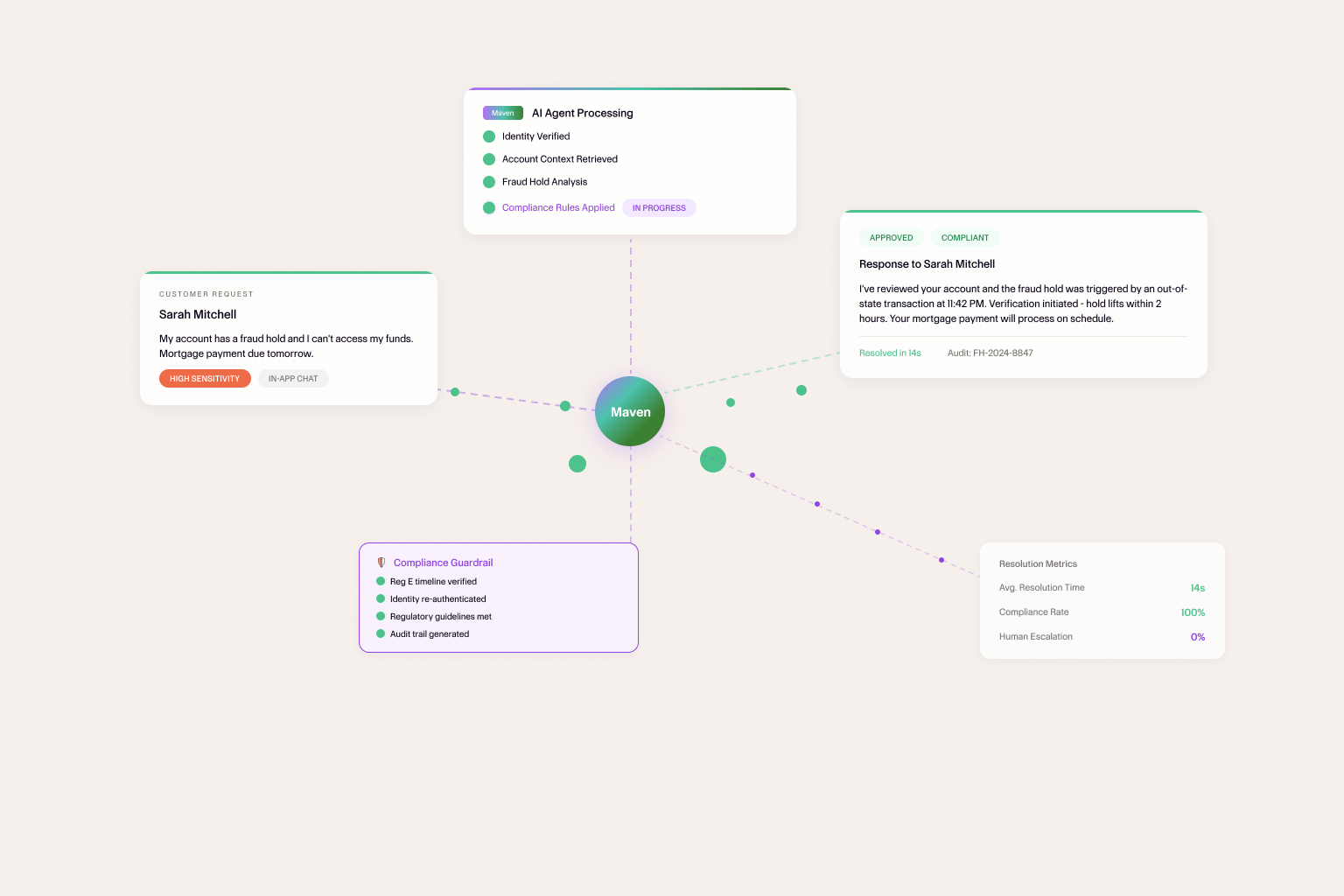

In practice, every interaction begins by establishing context — which surface, authenticated user, role, and policy environment is active. From those inputs, the platform determines what data is in scope and what actions are permitted. The agent then operates within that scope with full reasoning capability.

A business account owner asking about a team member's card activity sees what they're authorized to see. An employee cardholder asking the same question sees only their own data. A compliance analyst running a review operates under compliance-appropriate access rules. Same AI agent. Same underlying capability. Different eligibility contexts enforced at the architecture layer, before the agent reasons over anything.
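The scoping step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any platform's actual implementation: the role names, the `InteractionContext` and `Transaction` fields, and the `ELIGIBILITY_RULES` table are all hypothetical. The point it shows is ordering: the eligibility filter runs first, and the agent stand-in only ever receives the pre-scoped records.

```python
from dataclasses import dataclass
from typing import Callable

# Every name here (roles, fields, rule table) is a hypothetical illustration.


@dataclass(frozen=True)
class InteractionContext:
    surface: str      # where the conversation happens, e.g. "in_app_chat"
    user_id: str      # the authenticated user
    role: str         # e.g. "business_owner" or "employee_cardholder"
    business_id: str  # the business account the user belongs to


@dataclass(frozen=True)
class Transaction:
    txn_id: str
    business_id: str
    cardholder_id: str
    status: str


# One explicit rule per role. A role with no rule gets no data (deny by default).
ELIGIBILITY_RULES: dict[str, Callable[[InteractionContext, Transaction], bool]] = {
    "business_owner": lambda ctx, t: t.business_id == ctx.business_id,
    "employee_cardholder": lambda ctx, t: (
        t.business_id == ctx.business_id and t.cardholder_id == ctx.user_id
    ),
}


def eligible_transactions(ctx, transactions):
    """Return only the records this participant is permitted to see."""
    rule = ELIGIBILITY_RULES.get(ctx.role)
    if rule is None:
        return []
    return [t for t in transactions if rule(ctx, t)]


def answer_declined_question(ctx, transactions):
    """Stand-in for the agent: it reasons only over the pre-scoped records."""
    scoped = eligible_transactions(ctx, transactions)
    return [t.txn_id for t in scoped if t.status == "declined"]


LEDGER = [
    Transaction("t1", "biz-1", "emp-1", "declined"),
    Transaction("t2", "biz-1", "emp-2", "declined"),
    Transaction("t3", "biz-2", "emp-9", "declined"),
]
owner = InteractionContext("in_app_chat", "own-1", "business_owner", "biz-1")
employee = InteractionContext("in_app_chat", "emp-1", "employee_cardholder", "biz-1")

print(answer_declined_question(owner, LEDGER))     # owner: all cards in biz-1
print(answer_declined_question(employee, LEDGER))  # employee: own card only
```

Both users ask the same question and the same function answers it; only the eligibility context differs. A role without an entry in the table, such as a compliance analyst in this sketch, receives nothing until an explicit rule is written for it.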

This approach delivers three specific benefits that matter in FinTech.

Auditability. When every interaction is scoped by explicit eligibility rules, you have a clear record of what data was available to the agent for any given interaction. "What data did the agent access?" becomes a question you can answer precisely, not approximately, which is exactly what a regulatory inquiry requires.

Consistent access control enforcement. The eligibility layer doesn't depend on the agent remembering its instructions or correctly inferring context. It's enforced structurally, which means it applies consistently across every interaction, every edge case, every unusual input.

Scalable compliance posture. When new regulations arrive — and in FinTech, they always do — updating the eligibility rules in the architecture layer propagates immediately across all AI interactions. You're not re-prompting models or retraining systems. The rules apply everywhere, automatically.
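To make the auditability point concrete, here is one possible shape for a per-interaction scope record. It is a sketch under assumptions: the `AuditRecord` fields and the `record_scope` helper are hypothetical, and a real deployment would write to durable, tamper-evident storage rather than an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    interaction_id: str
    user_id: str
    role: str
    eligible_record_ids: tuple  # exactly what the agent was allowed to see
    recorded_at: str            # UTC timestamp in ISO 8601 format


def record_scope(interaction_id, user_id, role, eligible_record_ids, log):
    """Append one JSON line describing the data scope of an interaction."""
    entry = AuditRecord(
        interaction_id=interaction_id,
        user_id=user_id,
        role=role,
        eligible_record_ids=tuple(sorted(eligible_record_ids)),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(entry), sort_keys=True))
    return entry


audit_log = []  # hypothetical stand-in for an append-only audit store
record_scope("int-001", "emp-1", "employee_cardholder", ["t1"], audit_log)
print(audit_log[0])
```

Because the record is written from the eligibility decision rather than reconstructed from model output, answering a later inquiry becomes a lookup by interaction ID.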

The Role of Human Judgment Doesn't Disappear

One thing the architectural rethink doesn't change: there are interactions where human judgment is the right call, and a well-designed AI system makes it easy to get there.

In FinTech, these moments are predictable. A customer disputing a fraud determination the platform has already reviewed. A business account holder questioning a compliance hold. A high-value client with a complex multi-product question that involves judgment calls about exception policies.

These interactions benefit from Agent Maven handling information retrieval and context assembly: getting the human agent up to speed quickly, surfacing relevant history, and flagging the key questions that need answering, all while keeping the final judgment and communication in human hands.

The escalation path isn't a failure mode. It's a designed feature of a mature AI support architecture. Building it deliberately, with clear triggers, smooth handoffs, and full context passed to the human agent, is what separates AI deployments that hold up under scrutiny from ones that create incidents precisely when the stakes are highest.
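A designed escalation path might look like the following sketch. The trigger names in `ESCALATION_TOPICS` and the `Handoff` fields are assumptions chosen to mirror the examples in this section, not a description of any specific product. It illustrates two of the properties named above: triggers that are explicit, and a handoff that carries full context.

```python
from dataclasses import dataclass

# Hypothetical trigger list, mirroring the examples in this section.
ESCALATION_TOPICS = {
    "fraud_determination_dispute",
    "compliance_hold",
    "policy_exception",
}


@dataclass(frozen=True)
class Handoff:
    reason: str           # which trigger fired
    summary: str          # short recap so the human starts with context
    history: tuple        # relevant prior events, oldest first
    open_questions: tuple  # what still needs a human decision


def route(topic, summary, history, open_questions):
    """Return a Handoff when a trigger fires, or None if the agent may proceed."""
    if topic not in ESCALATION_TOPICS:
        return None
    return Handoff(
        reason=topic,
        summary=summary,
        history=tuple(history),
        open_questions=tuple(open_questions),
    )


handoff = route(
    "compliance_hold",
    "Business account holder is questioning a hold on outgoing payments.",
    ["hold placed", "customer contacted support"],
    ["Can the hold reason be disclosed to this user?"],
)
print(handoff.reason if handoff else "agent continues")
```

Keeping the triggers in a plain, reviewable set means the decision to escalate is not left to the model's judgment in the moment.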

From Cost Reduction to Trust Infrastructure

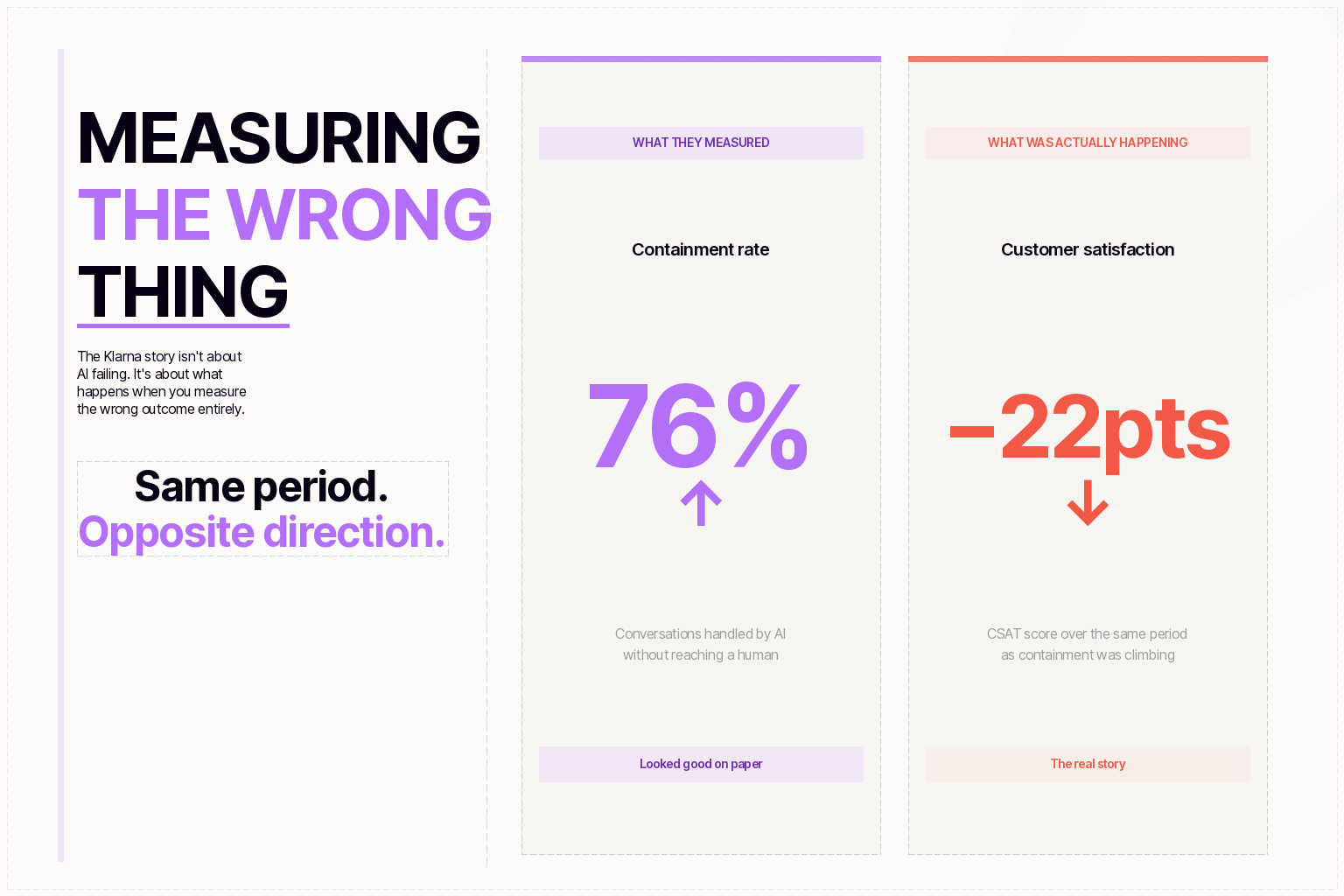

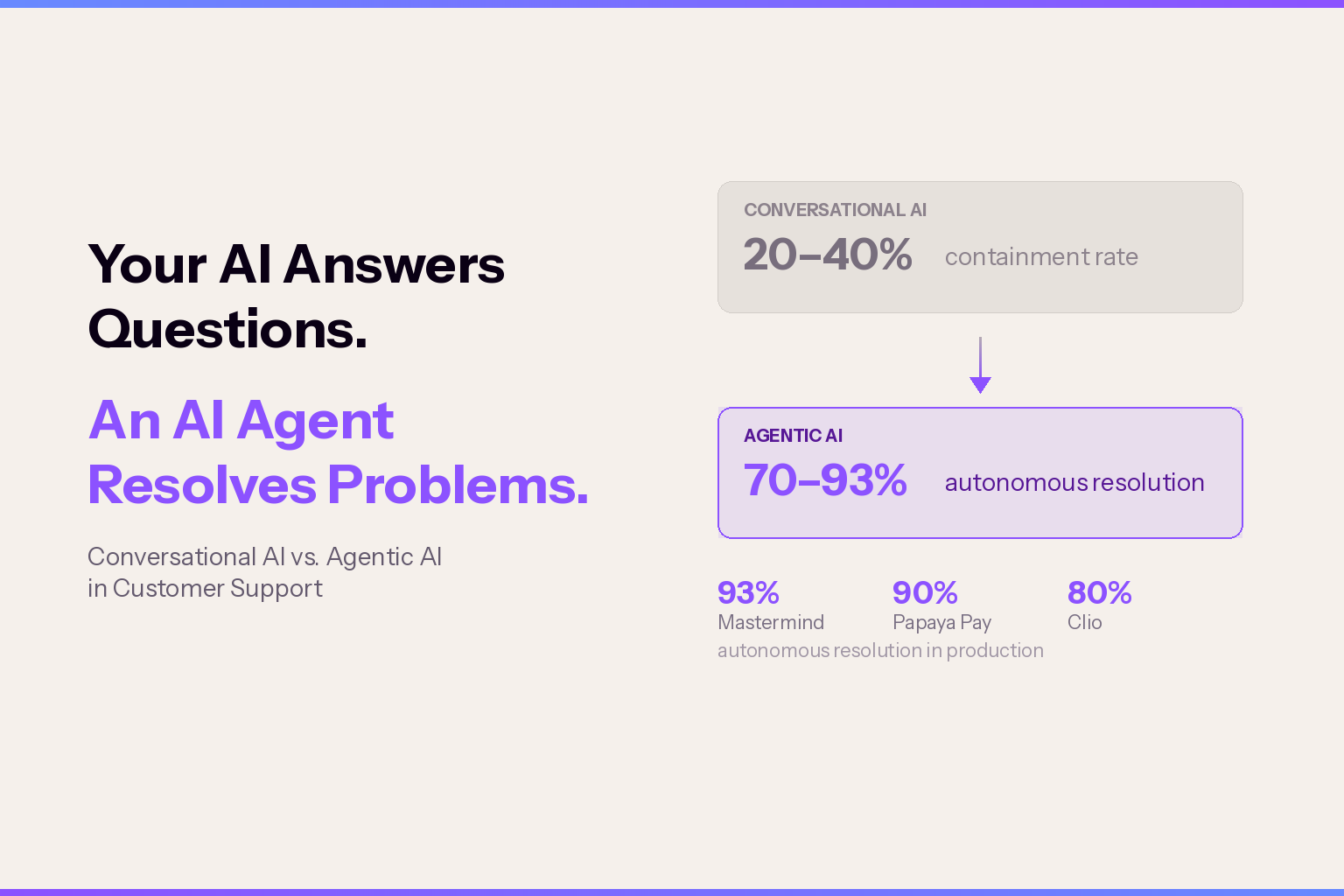

The early framing of AI in FinTech support was almost entirely about cost — deflection rates, ticket reduction, agent hour savings. These metrics are real and they matter, but they capture only part of what's at stake.

The FinTech platforms getting the most durable value from AI aren't primarily optimizing for cost reduction. They're building something harder to replicate: a support infrastructure that every participant group — customers, account holders, business clients, internal teams — can trust to handle their data appropriately and their interactions consistently.

That's a different design goal than deflection, and it requires a different architecture to achieve it. One where access control isn't an afterthought bolted onto a capable model, but a foundational layer that the model's capability operates on top of.

The platforms investing in that architecture now aren't just solving today's AI governance problem. They're building infrastructure that makes every future AI capability trustworthy by default, because the eligibility layer governing today's support agent will govern tomorrow's more capable one too.

In FinTech, trust isn't a feature. It's the product.
