Two Years After Klarna’s AI Reversal: The Measurement Problem Nobody Talked About

Klarna’s headline number was containment: how many customer conversations the AI absorbed before they reached a human. Here is what to measure instead.

In February 2024, Klarna's CEO announced that the company's AI did the work of 700 customer service agents. That quote ran in nearly every business publication. It became the stock citation for “AI is replacing knowledge work,” and CX leaders who had been cautious about AI found themselves being asked by their boards why they weren’t doing the same thing.

By May 2025, Klarna was quietly hiring humans back. By early 2026, the rehiring was well underway, and the CEO had publicly conceded that the company let cost override quality and was paying for it in customer satisfaction.

The coverage treated this as a story about AI not being good enough. That’s the wrong read. The AI performed exactly as designed. That’s the actual problem, and the lesson it teaches is narrower and more useful than the one most people took from the headlines.

We wrote about the human cost of that mistake earlier this year. This piece is about something different: the measurement problem that made the whole thing possible.

Everyone measured the wrong thing

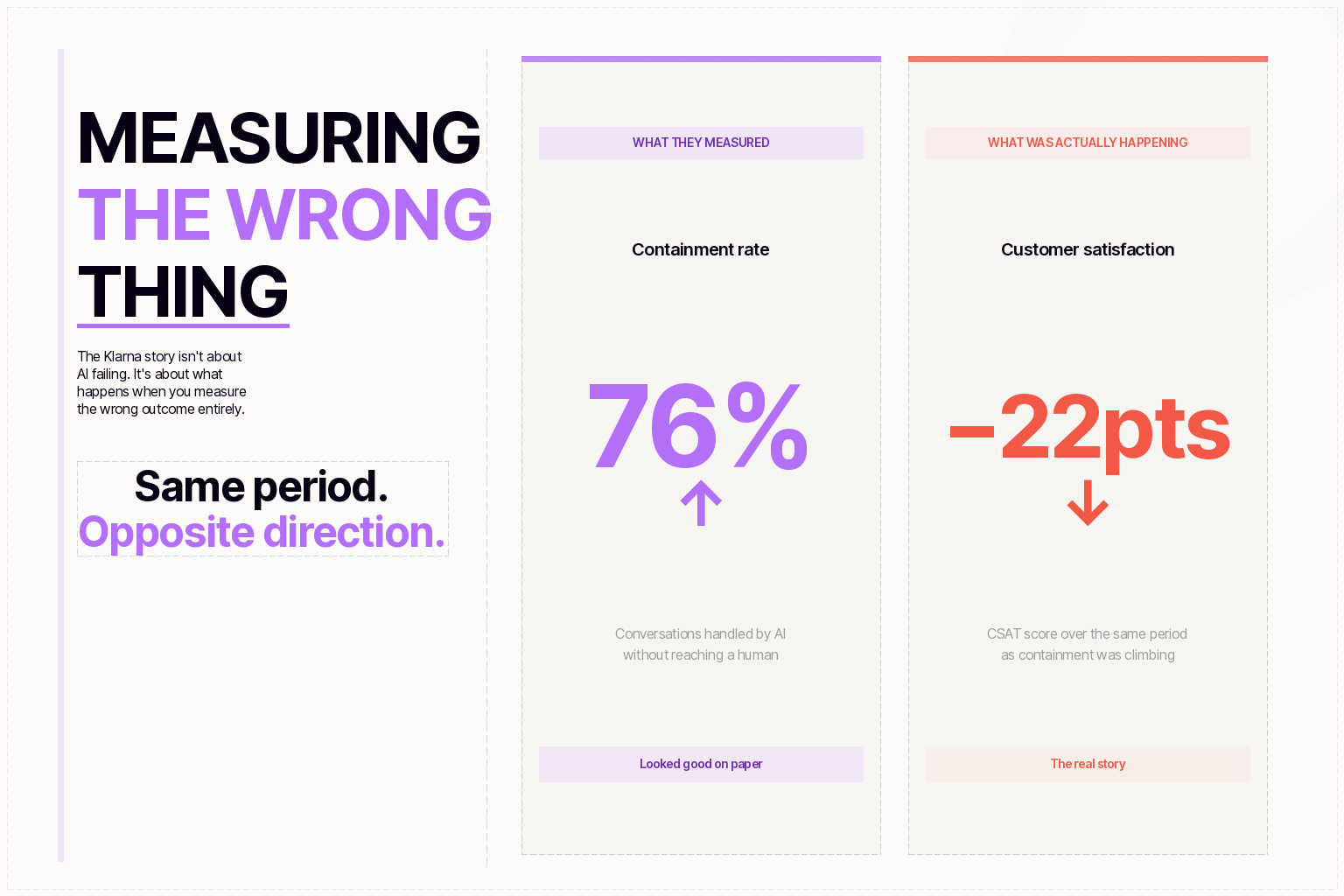

When Klarna made its original announcement, the headline number was containment: how many customer conversations the AI absorbed before they reached a human. Two-thirds to three-quarters of interactions, depending on the quote. That’s a striking figure. It’s also almost entirely disconnected from whether the customer’s problem was actually solved.

Containment measures whether the AI held the conversation. A customer who contacts support twice about the same issue counts as two containments if the AI handled both. A customer who accepts a wrong answer and gives up still counts. A customer frustrated enough to dispute a charge on their card still counts. The metric captured volume. It said nothing about outcomes.
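To make the gap concrete, here is a minimal sketch in Python of how the same set of conversations can score well on containment and badly on resolution. The `Conversation` fields, the `problem_solved` label, and the sample data are all hypothetical illustrations, not Klarna's data or any vendor's schema.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    customer_id: str
    issue: str
    handled_by_ai: bool   # conversation ended without reaching a human
    problem_solved: bool  # hypothetical label, e.g. from follow-up review


def containment_rate(conversations):
    """Share of conversations that never reached a human."""
    if not conversations:
        return 0.0
    return sum(c.handled_by_ai for c in conversations) / len(conversations)


def resolution_rate(conversations):
    """Share of conversations the AI handled AND actually solved."""
    if not conversations:
        return 0.0
    solved = sum(c.handled_by_ai and c.problem_solved for c in conversations)
    return solved / len(conversations)


conversations = [
    Conversation("a", "refund", True, False),   # wrong answer, customer returns
    Conversation("a", "refund", True, False),   # same issue, contained again
    Conversation("b", "login", True, True),     # genuinely solved by the AI
    Conversation("c", "charge", True, False),   # gave up and disputed the charge
    Conversation("d", "address", False, True),  # escalated to a human
]

print(f"containment: {containment_rate(conversations):.0%}")  # 80%
print(f"resolution:  {resolution_rate(conversations):.0%}")   # 20%
```

In this toy data, four of five conversations are contained, yet only one was both handled by the AI and solved. The hard part in practice is the `problem_solved` label, which is exactly what containment lets you avoid defining.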

Klarna’s AI was doing exactly what it was being measured on. The problem was the measurement. That distinction matters enormously for every CX leader evaluating AI vendors right now.

CSAT scores declined before Klarna’s volume metrics moved, which is exactly what you’d expect if containment and autonomous resolution were running in opposite directions. The signal was there. Nobody noticed the gap until the outcome had already gotten bad enough to surface in the numbers that executives actually watched.

Why containment became the default in the first place

Resolution is harder to define, which is most of why the industry defaulted to containment. Did the customer’s problem actually get solved? That question requires you to know what the problem was, whether the answer addressed it, and whether the customer came back. Containment just requires counting conversations that ended without a human.

The companies holding up in the current market built their measurement around those harder questions from the start. Repeat-contact rate, whether the same customer reopens a ticket on the same issue within 14 days, is the clearest resolution signal, and it’s the one most deployments skip because it requires tracking across conversations rather than within them. CSAT at the interaction level matters more than the aggregate. Escalations treated as continuous data about where the AI agent’s knowledge falls short are worth more than most teams realize.
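As a rough sketch of why repeat-contact rate needs tracking across conversations, the Python below groups contacts by customer and issue, then counts contacts that were followed by another one within the window. The tuple layout, the function name, and the exact definition (the share of contacts followed by a repeat) are assumptions for illustration; teams define the rate in different ways.

```python
from collections import defaultdict
from datetime import date, timedelta


def repeat_contact_rate(contacts, window_days=14):
    """Fraction of contacts followed by another contact from the same
    customer about the same issue within `window_days`.

    `contacts` is an iterable of (customer_id, issue, contact_date) tuples.
    """
    by_key = defaultdict(list)
    for customer_id, issue, day in contacts:
        by_key[(customer_id, issue)].append(day)

    window = timedelta(days=window_days)
    total = 0
    repeated = 0
    for days in by_key.values():
        days.sort()
        total += len(days)
        # Compare each contact with the next one on the same issue.
        for current, following in zip(days, days[1:]):
            if following - current <= window:
                repeated += 1
    return repeated / total if total else 0.0


contacts = [
    ("a", "refund", date(2025, 3, 1)),
    ("a", "refund", date(2025, 3, 6)),   # 5 days later: a repeat contact
    ("a", "refund", date(2025, 4, 30)),  # 55 days later: outside the window
    ("b", "login", date(2025, 3, 2)),
]

print(repeat_contact_rate(contacts))  # 0.25
```

Nothing in a single conversation record reveals the repeat. It only appears once contacts are joined on customer and issue, which is the cross-conversation tracking that many deployments skip.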

Maven’s data across enterprise deployments shows the pattern directly. Customers running on containment metrics hit improvement plateaus. Customers running on first-contact resolution rates, deflection-to-resolution ratios, and repeat-contact frequency keep improving, because their feedback loop connects to the real outcome.

The structural decision that made the measurement problem fatal

Klarna reduced headcount before the quality degradation was legible in the data. The logic was defensible: the AI was handling the volume, so human capacity could scale down. But quality problems compound slowly. They show up in CSAT, then in repeat contacts, then in churn, then in revenue numbers that are by definition backward-looking. By the time the signal was visible, the buffer to absorb the recovery was already gone.

This is the part of the Klarna story that gets missed in the “AI failed” narrative. The technology didn’t fail. The deployment structure failed, specifically the decision to reduce human capacity before validating that the AI was actually resolving issues and not just containing them.

The deployments that avoided this didn’t eliminate headcount first. They restructured it. Support teams shifted toward escalation handling, quality review, and customer success work, roles that require judgment the AI genuinely can’t replicate. The headcount reduction came from that restructuring, not from headcount-first thinking. The distinction seems subtle. The outcomes are not.

How enterprise buyers changed after Klarna

The story accelerated something that was already happening in procurement. The enterprise CX leaders buying today ask about first-contact resolution rates, not containment. They ask what happens when the AI is wrong, not just how often it’s right. They want repeat-contact data from live production deployments, not demo accuracy scores from a controlled environment.

That shift shows up in deal cycles in a concrete way. Vendors that can answer those questions with real customer data are moving faster. The ones still leading with containment numbers are getting harder questions and longer processes. The bar for “prove it” has moved. Klarna is a big part of why.

What deployments that held up actually look like

Clio evaluated 32 AI vendors and stress-tested 10 before deploying Maven. They’re resolving 80% of chat inquiries autonomously while closing 60% more tickets overall than their previous system. Their support team didn’t shrink; it shifted toward higher-value work. The metric they track is resolution, not deflection. Read the full story.

Papaya Pay, operating in regulated cross-border payments, reached 90% autonomous resolution. The compliance requirement (every interaction auditable, every escalation traceable, all meeting SOC 2, HIPAA, and PCI-DSS standards) forced the kind of resolution discipline that most deployments skip past. It turns out that discipline is a feature, not a constraint.

Neither deployment started by asking how many humans they could cut. Both started by asking what “solved” actually meant.

What to do with this if you’re evaluating AI vendors now

Define resolution before you deploy anything. Decide what “solved” means (first-contact resolution, no repeat contact within 14 days, specific task completion) and make that the success criterion from day one. If your vendor can’t tell you how they’d measure it, that’s the answer. Our deflection vs. resolution guide walks through exactly how to set this up.

Track repeat-contact rate as a first-class metric. Put it on the dashboard alongside your standard CSAT. Use it as a feedback loop into the AI agent’s knowledge base and behavior. If the same customer keeps coming back about the same problem, that’s a resolution failure, not a new ticket.

Treat escalation data as a signal, not a workflow step. Every escalation tells you where the AI’s knowledge or authority falls short. The teams improving fastest use that data continuously. An enterprise AI agent platform should make this easy to surface, not something you have to dig for.
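One way to treat escalations as data is simply to tally them by topic and by the kind of gap they expose. The sketch below assumes each escalation record carries a hypothetical `topic` and a `gap` label ("knowledge" or "authority"); those field names and the sample records are illustrative, not any platform's actual schema.

```python
from collections import Counter


def escalation_hotspots(escalations, top_n=3):
    """Return the most frequent (topic, gap) pairs among escalations."""
    counts = Counter((e["topic"], e["gap"]) for e in escalations)
    return counts.most_common(top_n)


escalations = [
    {"topic": "refund", "gap": "authority"},
    {"topic": "refund", "gap": "authority"},
    {"topic": "refund", "gap": "authority"},
    {"topic": "chargeback", "gap": "knowledge"},
    {"topic": "chargeback", "gap": "knowledge"},
    {"topic": "address change", "gap": "knowledge"},
]

for (topic, gap), count in escalation_hotspots(escalations, top_n=2):
    print(f"{topic}: {count} escalations ({gap} gap)")
```

Here the refund escalations point at a permissions limit rather than a missing article, which suggests a different fix than the chargeback ones. Reviewing a tally like this on a regular cadence is what turns escalation from a workflow step into a feedback loop.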

Require unified channel coverage from the start. A customer who calls, then emails, then opens a chat shouldn’t have to repeat themselves. Unified resolution across chat, voice, and email is what drives the outcome numbers, not single-channel automation that looks good in a demo.

Don’t reduce headcount before validating quality at scale. Structure the workforce transition alongside the technology deployment, not after it. The roles change as agents shift toward escalation handling, quality review, and exception management, but the transition needs to be designed, not assumed.

Where this leaves us

Gartner’s prediction that agentic AI will autonomously resolve 80% of common customer service issues by 2029 still looks achievable. The past two years haven’t changed the destination. They’ve clarified which paths lead there and which ones lead to a public reversal and a rehiring announcement.

The companies that get to 80% autonomous resolution will be the ones that defined resolution correctly before they deployed anything, held the AI accountable to that definition, and built the workforce transition into the plan from the start. The ones that optimized for containment, cut headcount early, and measured conversations instead of outcomes are the ones writing the cautionary tales.

The Klarna story isn’t a warning about AI capability. It’s a warning about what happens when a capable system is pointed at the wrong target.

If you’re evaluating AI customer service solutions and want a framework for comparing on the metrics that actually matter, our deflection vs. resolution guide walks through exactly how to build a business case that holds up. Or book a demo and see how Maven’s resolution numbers compare to what you’re running today.
