Your AI Answers Questions. An AI Agent Resolves Problems.

The real difference between conversational AI and agentic AI in customer support, and why the performance gap isn't subtle.

A Gartner survey found that 64% of customers would prefer companies didn’t use AI for customer service. And honestly? They’re not wrong, at least not about the AI most of them have experienced. The chatbots that loop you through five menus and then suggest you call a phone number you already tried. The ones that mark your ticket “resolved” because you gave up, not because anything got fixed.

That’s the legacy of conversational AI in customer support: systems optimized for containing interactions rather than resolving them. But a different architecture is now in production. One that doesn’t waste time just chatting to customers, but actually takes action on their behalf. The industry calls it agentic AI, and the performance gap between these two approaches isn’t subtle.

Conversational AI Was Built to Deflect. That’s the Core Problem.

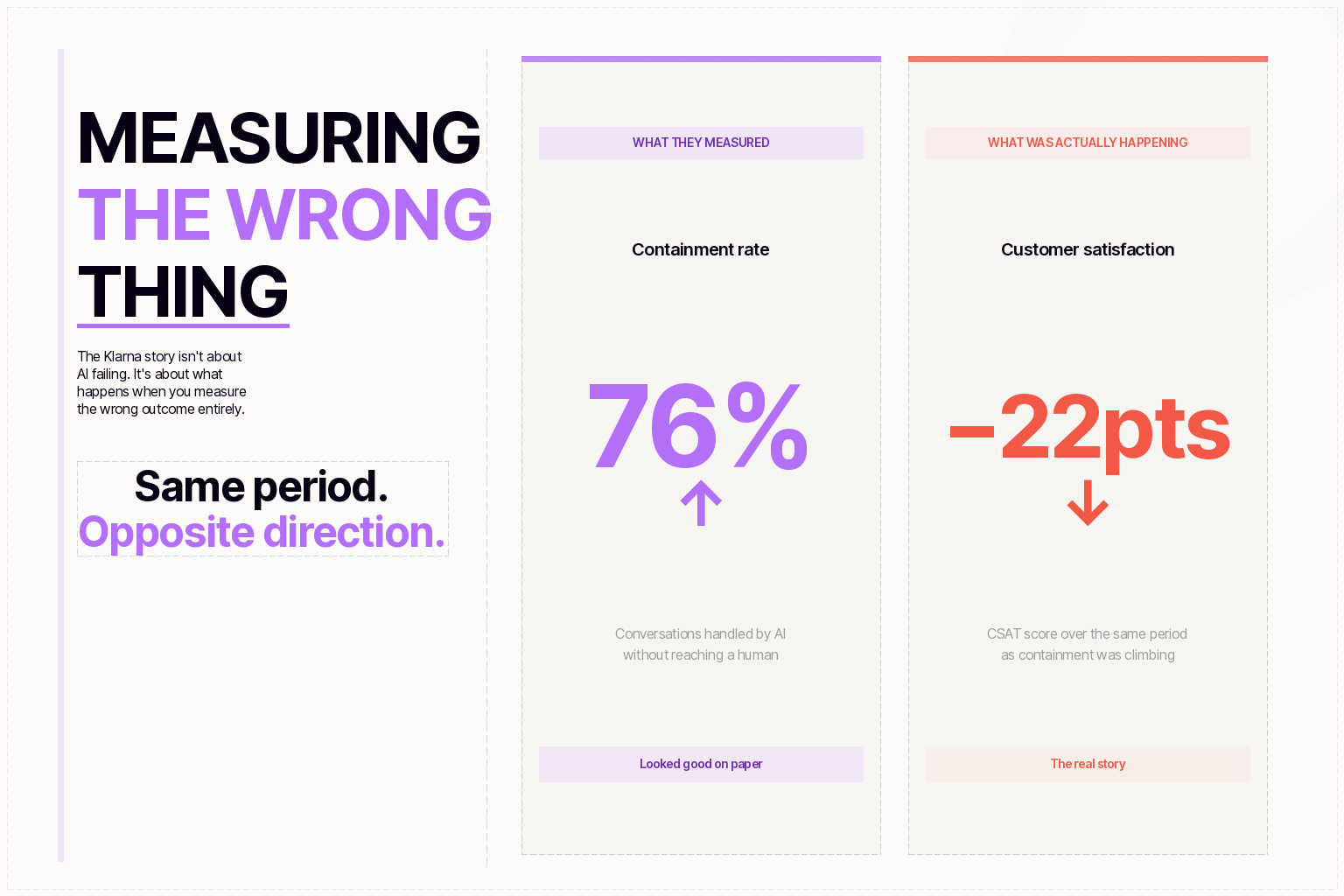

Traditional conversational AI, the category that includes most chatbots deployed over the past decade, was designed around a single metric: containment rate. How many interactions can you keep away from a human agent? The system could answer FAQs, surface help articles, and route tickets. What it couldn’t do was actually fix anything. No refund processing, account updates, or order modifications.

CNBC reported in April 2026 that many service chatbots still leave customers trapped in what they described as a maze of ambiguity. If you’ve ever measured your chatbot’s “deflection rate” and felt good about it, it’s worth asking: how many of those deflected tickets actually ended with a satisfied customer? Maven AGI’s own analysis of deflection vs. resolution metrics found the answer is often uncomfortable.

Agentic AI Doesn’t Answer. It Resolves.

The shift from conversational AI to agentic AI isn’t a feature upgrade. It’s an architectural change in what the system is designed to do. Analysts at every major firm have converged on the same distinction: conversational AI improves interactions; agentic AI improves execution.

Gartner’s March 2025 prediction that agentic AI will autonomously resolve 80% of common customer service issues by 2029 drew a clear line. Conversational AI is reactive: it handles bounded queries through dialogue. Agentic AI is proactive: autonomous agents make decisions, execute multi-step tasks across backend systems, and complete workflows with minimal human involvement.

McKinsey’s framing emphasizes autonomy as the defining characteristic: these systems break broad goals into discrete tasks, decide execution order, retrieve and evaluate information, and initiate action. BCG calls it the new frontier in customer service transformation. The common thread across every analyst definition is a simple one: agentic AI doesn’t generate responses. It executes outcomes.

In practice, that means when a customer contacts support about a billing error, an AI agent accesses the billing system, cross-references transaction history, identifies the discrepancy, issues a correction, sends a confirmation, and logs the whole interaction. One session. No escalation. No “I’ll transfer you to a specialist.” (Unless, of course, escalation is necessary. Then it should be escalated with the full context of the previous conversation available to the human agent.)
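To make that sequence concrete, here is a minimal Python sketch of the billing-error flow. It is an illustration under stated assumptions, not Maven AGI’s implementation: `Charge`, `Outcome`, `handle_billing_error`, and the `AUTO_CORRECTION_LIMIT` policy are all hypothetical names, and the in-memory list stands in for a real billing system.

```python
from dataclasses import dataclass, field


@dataclass
class Charge:
    charge_id: str
    amount: float    # what the customer was billed
    expected: float  # what the plan says they should have been billed


@dataclass
class Outcome:
    resolved: bool = False
    escalated: bool = False
    log: list = field(default_factory=list)  # context handed to a human on escalation


# Hypothetical policy: corrections larger than this go to a human agent.
AUTO_CORRECTION_LIMIT = 100.00


def handle_billing_error(charges):
    """Look up charges, find the discrepancy, correct it, confirm, and log."""
    outcome = Outcome()

    # 1. Cross-reference transaction history to find mismatched charges.
    wrong = [c for c in charges if abs(c.amount - c.expected) > 0.005]
    if not wrong:
        outcome.log.append("no discrepancy found; escalating with context")
        outcome.escalated = True
        return outcome

    # 2. Check policy before changing anything, so an escalation leaves
    #    the billing data untouched and the log explains why.
    for charge in wrong:
        delta = round(charge.amount - charge.expected, 2)
        if abs(delta) > AUTO_CORRECTION_LIMIT:
            outcome.log.append(f"{charge.charge_id}: delta {delta} over limit; escalating")
            outcome.escalated = True
            return outcome

    # 3. Issue the corrections (a stand-in for a write to the billing system).
    for charge in wrong:
        delta = round(charge.amount - charge.expected, 2)
        charge.amount = charge.expected
        outcome.log.append(f"{charge.charge_id}: corrected by {delta}")

    # 4. Confirm to the customer and record the result.
    outcome.log.append("confirmation sent")
    outcome.resolved = True
    return outcome
```

The detail worth noticing is the escalation path: when the sketch hands off, it returns the log it has built so far, which is the “full context” a human agent would need to pick up the case.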

The Performance Gap Is Already Measurable

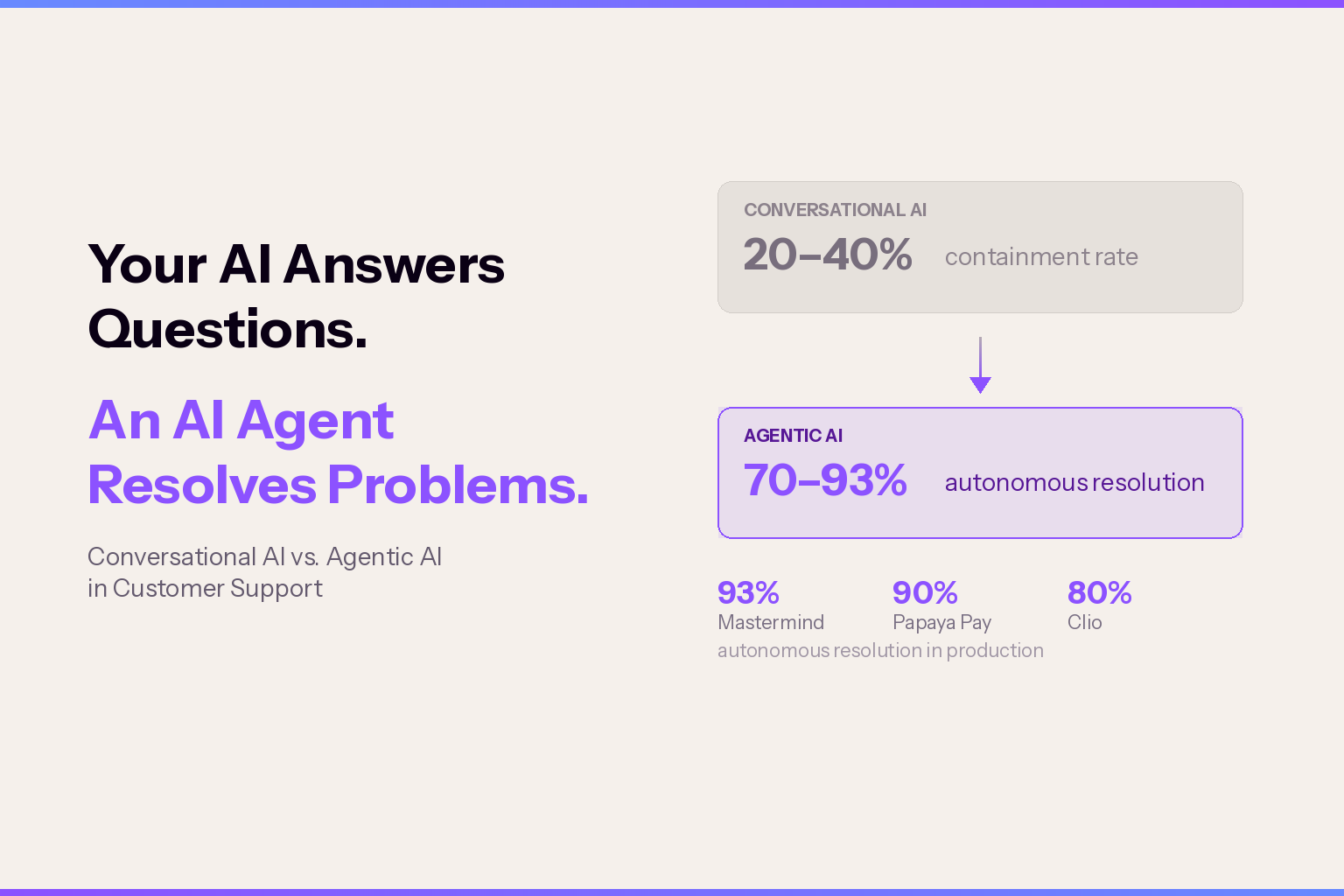

Early enterprise deployments are producing data that makes the distinction concrete. Traditional rule-based chatbots achieve 20–40% containment. LLM-integrated chatbots reach 40–55%. Well-implemented agentic AI systems are hitting 70–80% autonomous resolution, and some Maven AGI customers see resolution rates of 90% or higher. And that’s resolution, not containment.

Maven AGI’s customer data backs this up. Mastermind reached 93% autonomous resolution in six weeks. Papaya Pay hit 90% in three weeks. Clio achieved 80% resolution with 60% more tickets solved. These aren’t pilot numbers from controlled environments; they’re production deployments handling real customer volume.

Gartner has projected that conversational AI will reduce contact center labor costs by $80 billion in 2026, and the enterprises seeing that ROI are the ones measuring autonomous resolution, not deflection. IDC and Microsoft data show $3.50 returned for every $1 invested in AI customer service, with top performers reaching 8x.

The Catch: Most Companies Aren’t There Yet

For all the momentum, the gap between early adopters and the rest of the market is wide. McKinsey reports that fewer than 10% of enterprises have scaled AI agents to deliver tangible value. BCG found only 28% have unlocked measurable business impact from generative AI in customer service. And Gartner itself warns that over 40% of agentic AI projects will be canceled by the end of 2027 due to escalating costs or unclear ROI.

The organizations seeing results share a pattern: they invested in data infrastructure and system integration before deploying agents. They chose platforms with an overlay architecture that connects to their existing helpdesk—Zendesk, Salesforce, Freshdesk—rather than demanding a rip-and-replace migration. And they measured success by customer outcomes, not by how many tickets they managed to avoid.

Forrester’s 2026 predictions are blunt: slightly more AI self-service efforts will fail than succeed this year, primarily because companies deploy under cost pressure without the integration work that makes resolution possible. The lesson from both the successes and the failures is consistent: agentic AI isn’t a chatbot upgrade. It’s an operational architecture change.

What This Means for Your CX Strategy

If you’re running a support operation today, the practical question isn’t “should we adopt agentic AI?” The analyst consensus on that is already settled. The question is how to sequence the transition without repeating the mistakes that turned first-generation chatbots into a punchline.

Three things matter most based on what’s working in production:

Measure what matters. Switch from containment rate to autonomous resolution rate. If your vendor can’t report true resolution (verified by backend system state, not just conversation closure), you’re still doing containment theater. Maven AGI’s approach to evaluating AI agents breaks down exactly what to look for.

Integrate first, deploy second. The deployment speed advantage of an overlay model, days to weeks instead of months, only works if the AI can actually reach your systems. That means API access to your CRM, billing, order management, and ticketing. Without read-write integration, you’re just building a more expensive FAQ bot.

Plan for the hybrid. Every successful deployment, across every analyst report, converges on the same operating model: 60–70% AI, 30–40% human. The goal isn’t to eliminate your team. It’s to free them from repetitive resolution so they can handle the complex, high-stakes interactions where human judgment actually makes a difference.
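The first point, containment versus verified resolution, is easy to state and easy to blur in reporting, so a small Python sketch may help. The `Ticket` fields and both function names are hypothetical, and `backend_fixed` assumes you can check system state (for example, that a refund actually posted) for each ticket.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    closed_without_human: bool  # conversation ended with no agent handoff
    backend_fixed: bool         # backend state confirms the problem was fixed


def containment_rate(tickets):
    """Share of tickets that never reached a human, fixed or not."""
    if not tickets:
        return 0.0
    return sum(t.closed_without_human for t in tickets) / len(tickets)


def autonomous_resolution_rate(tickets):
    """Share of tickets closed without a human AND verified in the backend."""
    if not tickets:
        return 0.0
    verified = sum(t.closed_without_human and t.backend_fixed for t in tickets)
    return verified / len(tickets)


# Made-up sample: 10 tickets, 7 closed without a human, only 4 of those verified.
sample = (
    [Ticket(True, True)] * 4
    + [Ticket(True, False)] * 3   # customer gave up; nothing was fixed
    + [Ticket(False, True)] * 3   # a human agent resolved these
)
print(f"containment: {containment_rate(sample):.0%}")
print(f"autonomous resolution: {autonomous_resolution_rate(sample):.0%}")
```

In this invented sample, containment comes out at 70% while autonomous resolution is 40%. The 30-point difference is the set of customers who left without a fix, which is exactly what a containment dashboard hides.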

The window for getting this right is narrowing. The companies that move now, with the right architecture, the right integrations, and the right metrics, won’t just outperform their competitors on support costs. They’ll build something far more valuable: customers who trust that when they reach out, their problem actually gets solved. That’s what agentic AI makes possible. The question is whether you’re building toward it or still measuring deflection and calling it success.
