78% of Enterprises Have AI Support Pilots. Only 14% Have Made It to Production. Here's Why.

Most AI customer service pilots look good in demos and stall in deployment. Here's what the data says about the gap.

Most enterprise AI support projects don't fail at the idea stage. They fail somewhere between a successful proof of concept and the moment the system has to handle real volume, real data, and real consequences.

A March 2026 survey of 650 enterprise technology leaders put a number on the gap: 78% of enterprises have at least one AI agent pilot running in a controlled environment. Only 14% have successfully scaled an agent to organization-wide operational use. That gap, 64 percentage points between 'we have a pilot' and 'we have a deployment', is where most AI budgets quietly disappear.

The reason it happens isn't usually the AI itself. The models have matured. The tooling has improved. The gap is organizational and operational: most enterprises aren't building for production when they build their pilots. They're building for the demo.

What a Demo Tests vs. What Production Demands

A pilot runs in controlled conditions. The data is clean, the volume is manageable, the edge cases haven't shown up yet. Someone on the team is watching every interaction. When something breaks, it gets fixed before it becomes a pattern.

Production is different. It runs at volume, on data that is often messy and incomplete, with no safety net on individual interactions. The edge cases that never appeared in the pilot start showing up constantly. According to a Gartner analysis covered by CTO Magazine, more than 40% of agentic AI projects are expected to be cancelled by the end of 2027, not because the technology doesn't work but because of 'unclear business value, escalating costs, and inadequate risk controls.' Those are not technology failures. They're deployment architecture failures.

The organizations that successfully scale share a consistent implementation architecture even when their use cases differ. They don't discover this architecture during the pilot. They build it before deployment starts.

The Five Gaps That Account for Most Scaling Failures

The March 2026 survey identified five root causes that together account for 89% of scaling failures in enterprise AI agent deployments:

- Integration complexity with legacy systems. An AI agent that queries a static knowledge base performs reasonably well in a demo. An agent that has to reason across a CRM, a helpdesk, an order management system, and a billing platform in real time, and write back to those systems when it resolves something, is a different undertaking. Most pilots test the former. Most production requirements demand the latter.

- Inconsistent output quality at volume. Quality in a pilot is often sustained through supervision. When a human is reviewing every interaction, problems get caught early. At scale, that supervision disappears. If the agent hasn't been evaluated systematically on the full distribution of inputs it will encounter in production, including the unusual ones, quality degrades in ways that are invisible until customers start complaining.

- Absence of monitoring tooling. You can't improve what you can't see. Deployments that don't instrument agent performance from day one tend to plateau. The feedback loop between agent output and workflow design is what separates deployments that compound in value from deployments that stagnate.

- Unclear organizational ownership. Someone needs to own the performance of each agentic workflow: its business outcomes as well as its technical operation. Without clear ownership, accountability diffuses. Problems that should be addressed in days take weeks. Improvements that should be obvious never get made.

- Insufficient domain training data. Enterprise data is never a single, clean source. It's fragmented across systems, inconsistent in structure, and constantly evolving. The moment you move from a controlled pilot to production, that fragmentation compounds. Agents that were reasoning accurately over a curated dataset start encountering contradictory documents, duplicate records, and outdated policies.

What Production-Ready Actually Means for CX

For customer support specifically, the Infor Enterprise AI Adoption Impact Index, which surveyed 1,000 business decision-makers across the US, UK, Germany, and France in early 2026, found that more than half of businesses struggle to scale AI beyond initial deployments. The barriers they cited are not capability gaps. They're execution gaps.

Production-ready means the platform can handle real ticket volume without quality degradation. It means escalations pass complete context to human agents rather than dropping the conversation history at the handoff. It means the governance infrastructure (audit trails, permission tiers, exception routing) was designed in from the start rather than retrofitted under pressure from an enterprise security review. And it means the integration layer connects bidirectionally to the systems where support work actually happens: the helpdesk, the CRM, the billing system, the knowledge base.
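One way to picture the handoff requirement is as a payload that cannot be built without the conversation history. The sketch below is illustrative only: the `Handoff` fields and the `build_handoff` helper are hypothetical names chosen for this example, not any vendor's API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Handoff:
    """Everything a human agent needs to pick up where the AI stopped."""
    ticket_id: str
    customer_id: str
    transcript: List[str]          # full conversation so far, oldest first
    actions_attempted: List[str]   # what the AI already tried
    escalation_reason: str


def build_handoff(ticket_id, customer_id, transcript,
                  actions_attempted, escalation_reason):
    # Refuse to escalate with missing context rather than silently dropping it.
    if not transcript:
        raise ValueError("handoff requires the conversation transcript")
    if not escalation_reason.strip():
        raise ValueError("handoff requires an escalation reason")
    return Handoff(
        ticket_id=ticket_id,
        customer_id=customer_id,
        transcript=list(transcript),
        actions_attempted=list(actions_attempted),
        escalation_reason=escalation_reason.strip(),
    )
```

Failing loudly on an empty transcript is a design choice for the sketch: a blocked escalation is visible in monitoring, while a context-free handoff usually is not.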

This is worth understanding if you're in the early stages of an AI customer support evaluation. The question isn't just whether the platform handles support volume. The real question is whether it was designed to run in production or designed to win a procurement process. Those are different design philosophies, and they produce different systems.

The Metrics That Tell You Whether You're on Track

Before any pilot transitions to broader deployment, there are three measurements that distinguish teams who are on a path to production from teams who are building a permanent proof of concept.

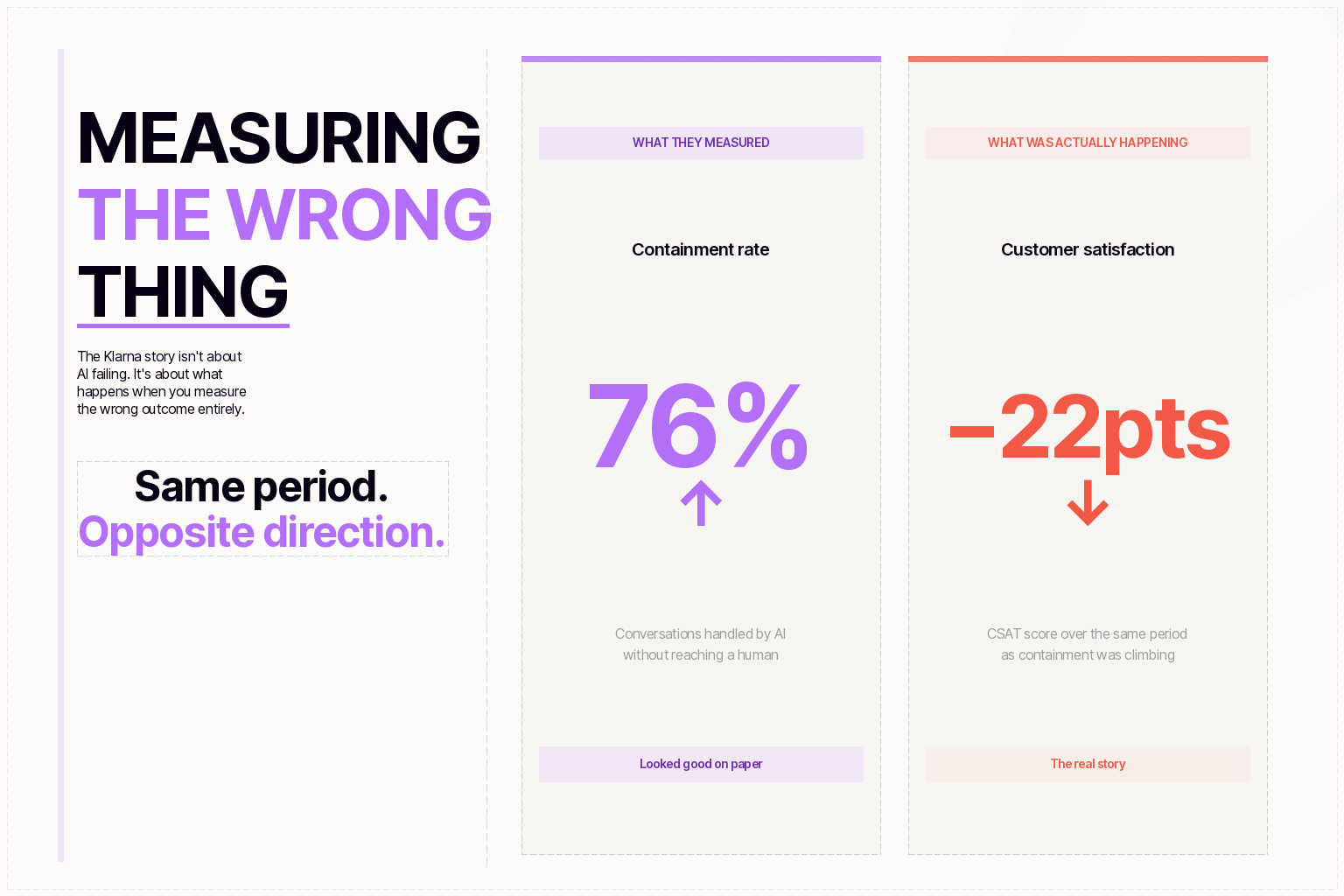

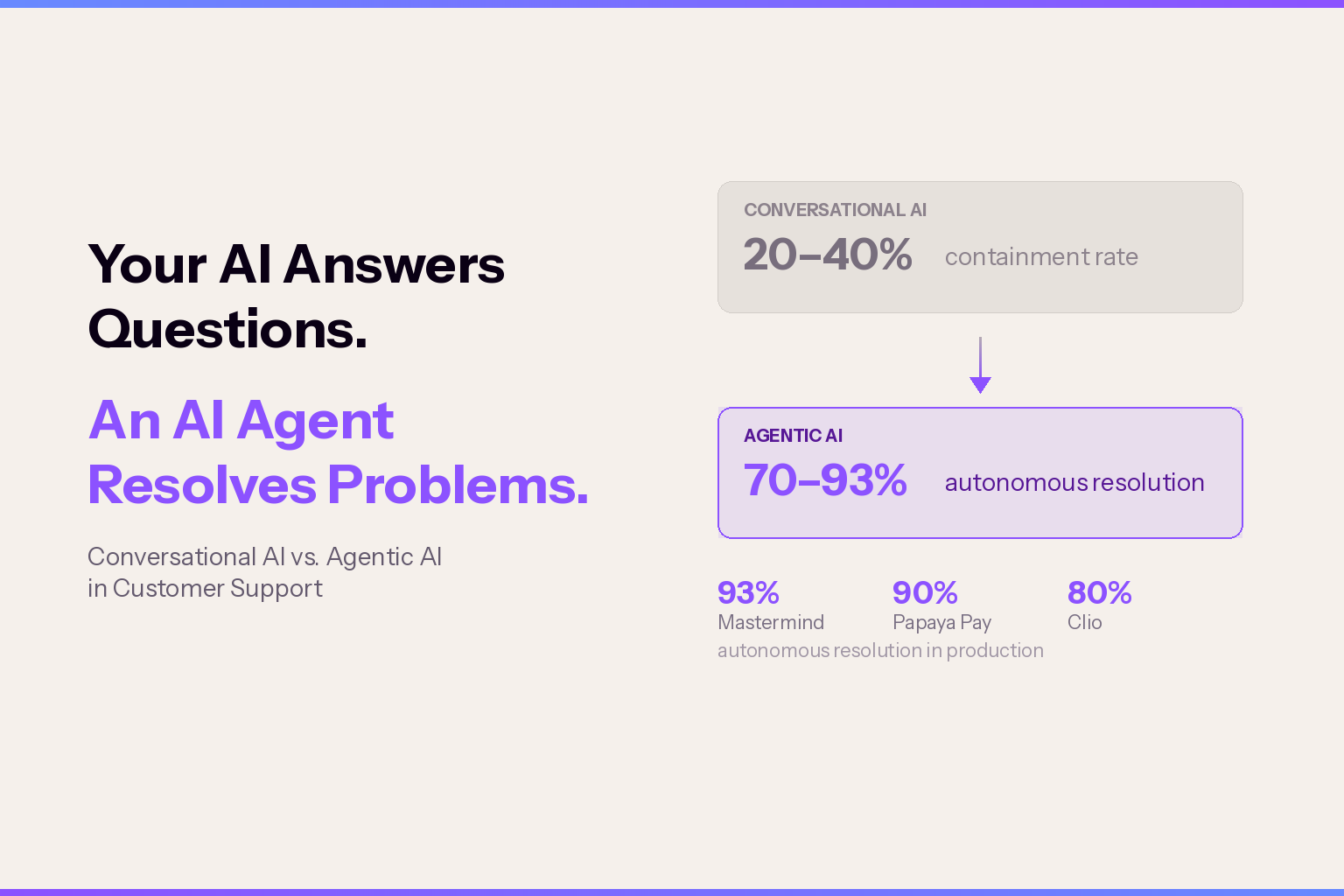

The first is autonomous resolution rate, not deflection rate. Deflection counts contacts that never reached a human agent, whether or not the customer's problem was actually solved. Resolution counts contacts the AI closed. A platform can show strong deflection numbers while the underlying resolution rate is low enough that the economics don't justify the investment.

The second is the reopen rate. A contact that gets 'resolved' by the AI and then reopens as a human ticket within 24 hours wasn't resolved; it was deferred. Most platforms don't surface this metric by default, which means teams often don't discover the problem until it shows up in customer satisfaction scores.

The third is performance at the 90th percentile of query complexity, not the median. Pilots tend to be evaluated on average performance. Production deployments live and die on the edge cases. Evaluating a platform only on the queries it handles well is how organizations end up with a deployment that works for 60% of contacts and consistently fails the other 40%, usually the ones that matter most.
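To make these measurements concrete, here is a minimal sketch of how they might be computed from a contact log. The `Contact` fields, the numeric `complexity` score, and the nearest-rank percentile cutoff are all assumptions made for illustration; a real helpdesk export will look different.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

REOPEN_WINDOW = timedelta(hours=24)  # the 24-hour window named in the text


@dataclass
class Contact:
    closed_by_ai: bool                     # AI closed it with no human involved
    complexity: float                      # hypothetical score; higher is harder
    closed_at: Optional[datetime] = None
    reopened_at: Optional[datetime] = None


def resolution_rate(contacts: List[Contact]) -> float:
    """Share of contacts the AI closed."""
    if not contacts:
        return 0.0
    return sum(c.closed_by_ai for c in contacts) / len(contacts)


def reopen_rate(contacts: List[Contact]) -> float:
    """Share of AI-closed contacts that reopened within the window."""
    closed = [c for c in contacts if c.closed_by_ai and c.closed_at is not None]
    if not closed:
        return 0.0
    reopened = [
        c for c in closed
        if c.reopened_at is not None
        and c.reopened_at - c.closed_at <= REOPEN_WINDOW
    ]
    return len(reopened) / len(closed)


def hardest_tail(contacts: List[Contact]) -> List[Contact]:
    """Contacts at or above the 90th percentile of complexity (nearest rank)."""
    if not contacts:
        return []
    ranked = sorted(c.complexity for c in contacts)
    cutoff = ranked[(9 * len(ranked) + 9) // 10 - 1]  # ceil(0.9 * n) - 1
    return [c for c in contacts if c.complexity >= cutoff]
```

Comparing `resolution_rate(contacts)` with `resolution_rate(hardest_tail(contacts))` shows how much of the headline number depends on the easy queries.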

For a fuller evaluation framework, the post on how to evaluate AI agents for enterprise customer service covers the specific criteria that separate production-capable platforms from demo-optimized ones.

What Separates the 14% Who Scale

The enterprises that successfully move from pilot to production share a few consistent practices. They start with data readiness before agent deployment, auditing the sources the agent will rely on, establishing clean integration layers, and ensuring the information the agent accesses is current and complete enough to support reliable reasoning.
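A data readiness audit can start very simply. The sketch below flags stale documents, exact duplicates, and titles that map to more than one body, assuming each source document carries a `last_reviewed` date. The `SourceDoc` shape, the one-year staleness threshold, and the `audit_sources` helper are hypothetical, and matching on normalized text is a crude stand-in for real deduplication.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List


@dataclass
class SourceDoc:
    doc_id: str
    title: str
    body: str
    last_reviewed: date


def _normalize(text: str) -> str:
    # Collapse case and whitespace so formatting differences don't hide matches.
    return " ".join(text.lower().split())


def audit_sources(docs: List[SourceDoc], today: date,
                  max_age_days: int = 365) -> Dict[str, list]:
    max_age = timedelta(days=max_age_days)
    stale = [d.doc_id for d in docs if today - d.last_reviewed > max_age]

    ids_by_body = defaultdict(list)
    bodies_by_title = defaultdict(set)
    for d in docs:
        ids_by_body[_normalize(d.body)].append(d.doc_id)
        bodies_by_title[_normalize(d.title)].add(_normalize(d.body))

    # Same body under several ids: duplicate records.
    duplicates = [ids for ids in ids_by_body.values() if len(ids) > 1]
    # Same title with different bodies: possibly contradictory versions.
    conflicting_titles = sorted(
        title for title, bodies in bodies_by_title.items() if len(bodies) > 1
    )
    return {
        "stale": stale,
        "duplicates": duplicates,
        "conflicting_titles": conflicting_titles,
    }
```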

They build audit trail and permission infrastructure before expanding agent autonomy, not after. Enterprise procurement committees and legal teams require queryable records of every agent action. Deployments that can't produce this documentation stall at the point of broader rollout. Building it in from the start is substantially easier than retrofitting it.
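As a rough illustration of what a "queryable record of every agent action" could look like, the sketch below logs actions to SQLite using Python's standard library. The table name, columns, and helper functions are assumptions invented for this example; a production system would add access controls, retention rules, and tamper resistance.

```python
import json
import sqlite3
from datetime import datetime, timezone


def open_audit_log(path=":memory:"):
    """Open (or create) the audit database. In-memory by default for the demo."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS agent_actions (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               ts TEXT NOT NULL,
               ticket_id TEXT NOT NULL,
               agent_id TEXT NOT NULL,
               action TEXT NOT NULL,
               target_system TEXT NOT NULL,
               permission_tier TEXT NOT NULL,
               details TEXT NOT NULL
           )"""
    )
    return conn


def record_action(conn, ticket_id, agent_id, action,
                  target_system, permission_tier, details):
    """Append one agent action; details is any JSON-serializable dict."""
    conn.execute(
        "INSERT INTO agent_actions "
        "(ts, ticket_id, agent_id, action, target_system, permission_tier, details) "
        "VALUES (?, ?, ?, ?, ?, ?, ?)",
        (datetime.now(timezone.utc).isoformat(), ticket_id, agent_id, action,
         target_system, permission_tier, json.dumps(details, sort_keys=True)),
    )
    conn.commit()


def actions_for_ticket(conn, ticket_id):
    """Return every recorded action for a ticket, in the order it happened."""
    rows = conn.execute(
        "SELECT action, target_system, permission_tier, details "
        "FROM agent_actions WHERE ticket_id = ? ORDER BY id",
        (ticket_id,),
    ).fetchall()
    return [
        {"action": a, "target_system": t, "permission_tier": p,
         "details": json.loads(d)}
        for a, t, p, d in rows
    ]
```

Recording the permission tier alongside each action is what lets a reviewer answer both "what did the agent do?" and "was it allowed to?" from the same query.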

And they pick a starting workflow based on volume and measurability rather than on what demos best. A high-volume, well-defined support workflow (password resets, order status, account changes) gives an agent enough inputs to surface quality problems quickly and enough data to improve systematically. Starting with a complex, low-volume workflow produces a deployment that performs inconsistently and is hard to evaluate.

The question for any enterprise AI agent platform isn't whether AI agents are ready for enterprise support. In 2026, the capability is there. The question is whether the deployment architecture (the integration depth, the governance infrastructure, the monitoring tooling, the organizational ownership) has been built to the same standard as the model itself.

Most pilots don't get that far. The ones that do tend to become the production deployments that earn renewals.
