Deflection vs. Resolution: The Metric That Decides Whether AI Customer Service Works

Your AI vendor’s automation rate might be hiding the number that actually matters.

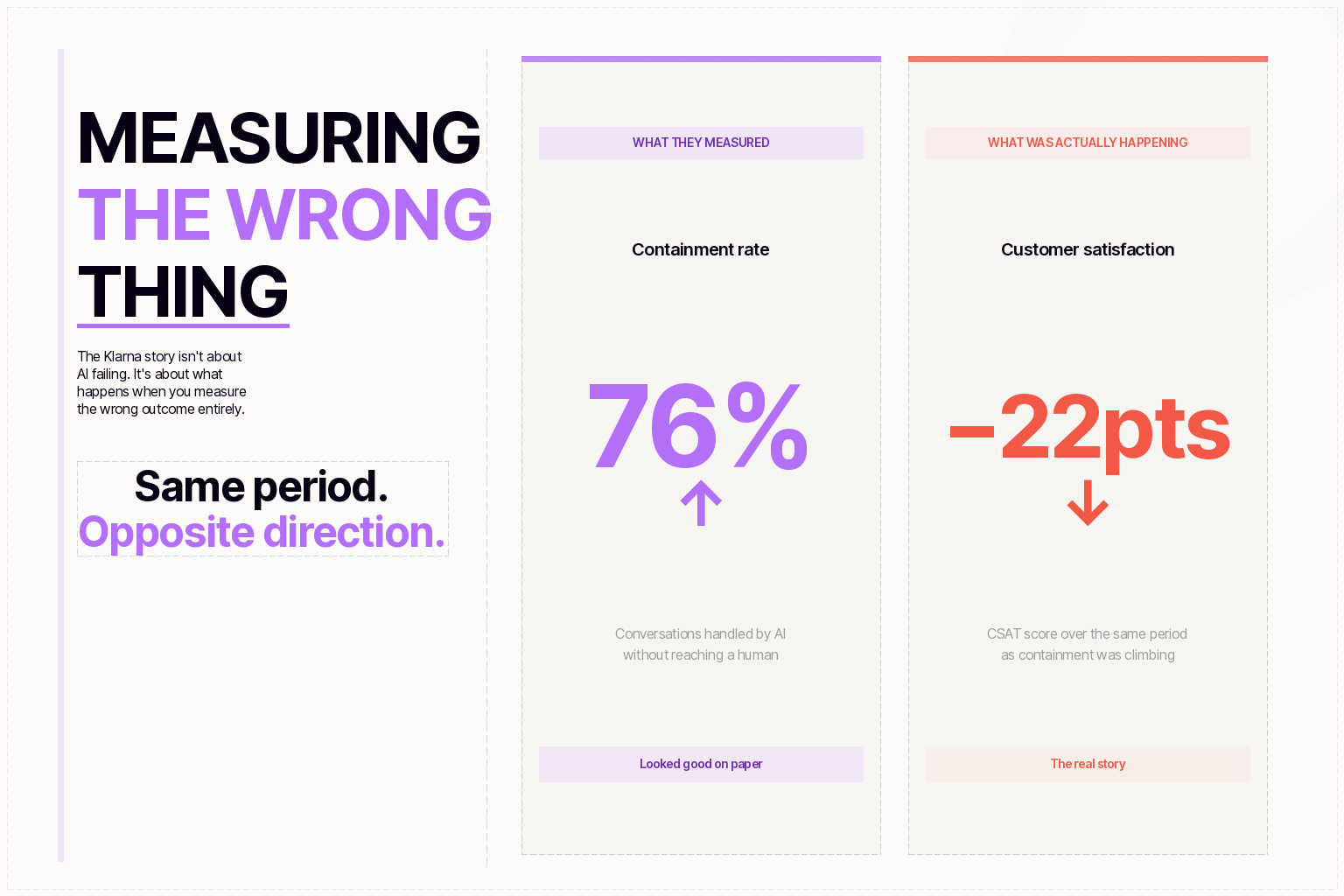

Nearly one in five consumers who have used AI for customer service say the experience provided no benefit at all. That finding, from the Qualtrics 2026 Customer Experience Trends Report, landed alongside a wave of vendor press releases touting 50%, 60%, even 80% automation rates.

Both things can be true at the same time. And the gap between them is a single word: deflection.

Deflection counts interactions the AI touched. Resolution counts problems the AI solved. The distance between those two numbers is where customers are getting lost — and where CX leaders are making expensive decisions based on the wrong data.

What deflection actually measures

When a customer submits a question and the system responds with a link to an FAQ article, most platforms log that as a successful deflection. The ticket never reached a human agent. The automation rate ticks up. The dashboard looks healthy.

But the dashboard doesn't know whether that customer found their answer. It doesn't know if they gave up, called back the next day, or quietly churned. Deflection measures what the AI did. It says nothing about what the customer experienced.

This isn't a new observation, but it's getting worse as AI deployments scale. A recent study by Ada and NewtonX surveyed 2,000 consumers and 500 enterprise CX decision-makers across three continents, and the gap was stark: businesses optimize for cost reduction and ticket deflection, while consumers value resolution above everything else. Resolution ranked seventh among the outcomes businesses prioritized. Seventh.

The same study found that 55% of businesses measure AI and human agent performance together, making it nearly impossible to isolate how the AI is actually performing. When you can't separate the signals, you can't tell whether your AI is resolving issues or just absorbing volume.
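To make the measurement problem concrete, here is a minimal Python sketch using made-up numbers. The ticket records and the `ai` / `human` handler labels are illustrative assumptions, not data from the study. It shows how a blended resolution rate can look acceptable while the AI-only rate sits well below it.

```python
from collections import defaultdict

# Hypothetical ticket outcomes as (handler, resolved) pairs. Purely illustrative.
tickets = (
    [("ai", True)] * 30 + [("ai", False)] * 30
    + [("human", True)] * 36 + [("human", False)] * 4
)

def resolution_rates(records):
    """Return the blended resolution rate plus a per-handler breakdown."""
    totals = defaultdict(int)
    resolved = defaultdict(int)
    for handler, was_resolved in records:
        totals[handler] += 1
        resolved[handler] += int(was_resolved)
    blended = sum(resolved.values()) / sum(totals.values())
    by_handler = {h: resolved[h] / totals[h] for h in totals}
    return blended, by_handler

blended, by_handler = resolution_rates(tickets)
print(f"blended: {blended:.0%}")
for handler, rate in by_handler.items():
    print(f"{handler}: {rate:.0%}")
```

In this invented data set the blended figure lands between the two handlers, so a team looking only at the combined number would never see how far the AI lags the human agents.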

The math that makes deflection dangerous

Here's a scenario that plays out more often than most CX leaders realize.

Your support operation receives 10,000 tickets a month. The vendor dashboard shows a 60% automation rate: 6,000 tickets handled without a human. Looks strong.

But dig into the data. Of those 6,000 automated interactions, maybe 75% were genuinely resolved. The customer got what they needed and didn't come back. The other 25% were deflected — the AI responded, but the problem persisted. Some of those customers tried again through a different channel. Some called. Some left.

Your actual autonomous resolution rate isn't 60%. It's 45%: 4,500 genuinely resolved tickets out of 10,000. And the 1,500 tickets that looked automated but weren't? They're still in your system, just disguised as re-contacts, escalations, or churn.
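The arithmetic in this scenario can be written as a short Python sketch. The function name and the returned keys are hypothetical, chosen only for illustration, and the 75% resolved share is the scenario's assumption rather than a measured value.

```python
def autonomous_resolution(total_tickets, automated_tickets, resolved_share):
    """Split automated tickets into genuinely resolved and merely deflected."""
    resolved = round(automated_tickets * resolved_share)
    deflected = automated_tickets - resolved
    return {
        "automation_rate": automated_tickets / total_tickets,
        "resolution_rate": resolved / total_tickets,
        "hidden_tickets": deflected,
    }

# Scenario from the text: 10,000 tickets, 6,000 automated, 75% of those resolved.
result = autonomous_resolution(10_000, 6_000, 0.75)
print(result)
```

Swapping in your own re-contact data for `resolved_share` gives a quick estimate of how far the headline automation rate overstates what the AI actually solved.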

One framework gaining traction is what some analysts call Resolution Durability — measuring not just whether an issue was marked resolved, but whether the customer came back about the same problem within 72 hours. A high deflection rate paired with a high re-contact rate isn't automation. It's cost deferral.
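A 72-hour re-contact check along these lines could be sketched as follows. This is a minimal illustration, not a standard definition: the `Ticket` fields, the idea of matching on customer plus topic, and the sample data are all assumptions, and real helpdesk exports will need their own logic for deciding what counts as "the same problem."

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass
class Ticket:
    customer_id: str
    topic: str            # hypothetical issue category
    opened_at: datetime
    closed_at: datetime
    closed_by_ai: bool

def recontact_rate(tickets, window=timedelta(hours=72)):
    """Share of AI-closed tickets followed by a same-customer, same-topic
    ticket opened within `window` of the original being closed."""
    ai_closed = [t for t in tickets if t.closed_by_ai]
    if not ai_closed:
        return 0.0
    recontacted = 0
    for t in ai_closed:
        for other in tickets:
            if other is t:
                continue
            same_issue = (other.customer_id == t.customer_id
                          and other.topic == t.topic)
            if same_issue and t.closed_at < other.opened_at <= t.closed_at + window:
                recontacted += 1
                break
    return recontacted / len(ai_closed)

# Illustrative data only.
sample = [
    Ticket("c1", "refund", datetime(2025, 1, 1, 9, 0), datetime(2025, 1, 1, 9, 5), True),
    Ticket("c1", "refund", datetime(2025, 1, 2, 10, 0), datetime(2025, 1, 2, 10, 30), False),
    Ticket("c2", "address", datetime(2025, 1, 1, 11, 0), datetime(2025, 1, 1, 11, 2), True),
    Ticket("c3", "billing", datetime(2025, 1, 1, 12, 0), datetime(2025, 1, 1, 12, 3), True),
    Ticket("c3", "billing", datetime(2025, 1, 6, 12, 0), datetime(2025, 1, 6, 12, 20), False),
]
rate = recontact_rate(sample)
print(f"72-hour re-contact rate: {rate:.1%}")
```

In the sample, customer c1 comes back about the same refund the next day, while c3's follow-up arrives five days later and falls outside the window, so one of the three AI-closed tickets counts as a re-contact.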

What resolution looks like in production

Autonomous resolution means the AI fully solved the customer's problem without human involvement, without a follow-up ticket, and without the customer trying again through another channel. That's a higher bar than most vendor dashboards are set up to measure, but it's the bar that correlates with the outcomes CX leaders actually care about: reduced cost per ticket, improved CSAT, and lower churn.

Three things have to be true for an AI interaction to count as a genuine resolution.

The AI needs access to your systems — not just your knowledge base, but your order management, billing, CRM, and ticketing tools. A customer asking to change a shipping address doesn't need a link to your help center. They need the address changed. If the AI can read your systems but can't write to them, it can answer questions but can't solve problems. That's a sophisticated FAQ, not an AI agent.

The AI needs to take the action. Processing a refund, rebooking a flight, updating an account — these are the interactions that drive resolution. The gap between platforms that surface information and platforms that execute transactions is the gap between deflection and resolution.

The AI needs confirmation that the customer agrees the problem is solved. This is the piece most platforms skip. They close the ticket when the AI responds, not when the customer confirms the issue is handled. Some vendors are starting to adopt customer-confirmed resolution as a metric, and the data is encouraging — platforms that measure this way consistently see CSAT scores 15–20% higher than platforms that rely on automated ticket closure.
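One way to encode these conditions in reporting is sketched below. The `Interaction` fields and the category labels are hypothetical, and the rule is deliberately strict: an interaction counts as resolved only if the AI completed an action and the customer confirmed it. Write access to backend systems is treated as implied by `action_executed`.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass
class Interaction:
    ai_responded: bool
    action_executed: bool       # e.g. refund processed, address changed
    customer_confirmed: bool    # customer said the issue is handled
    escalated_to_human: bool = False

def classify(interaction):
    """Label an interaction as escalated, resolved, deflected, or unhandled."""
    if interaction.escalated_to_human:
        return "escalated"
    if interaction.action_executed and interaction.customer_confirmed:
        return "resolved"
    if interaction.ai_responded:
        return "deflected"
    return "unhandled"

# Illustrative interactions only.
log = [
    Interaction(True, True, True),           # action taken and confirmed
    Interaction(True, False, False),         # FAQ link sent, nothing confirmed
    Interaction(True, True, False),          # action taken, never confirmed
    Interaction(True, False, False, True),   # handed to a human agent
]
counts = Counter(classify(i) for i in log)
print(counts)
```

Under this rule, a dashboard that auto-closes on response would report three of the four sample interactions as automated, while only one meets the resolution bar.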

Five questions to ask during any vendor evaluation

If you're evaluating AI customer service platforms right now, the deflection-vs-resolution distinction should be the first filter. Here's how to surface it in a demo or POC.

How do you define resolved? If the answer involves containment, deflection, or automation rate without specifying that the customer's problem was fully solved, you're looking at a deflection metric wearing a resolution label. Ask them to distinguish between interactions the AI handled and issues the AI solved.

What's your re-contact rate within 72 hours? This is the metric that exposes false resolution. If 25% of resolved tickets generate a follow-up within three days, the effective resolution rate is materially lower than the headline number: a reported 60% becomes roughly 45%. Vendors confident in their resolution quality will share this number. The ones who hesitate probably haven't measured it.

Can the AI take actions in my backend systems, or just surface answers? Read vs. read-write integration is the architectural dividing line. An AI that can look up an order status but can't process a return is always going to have a lower ceiling on genuine resolution. Ask specifically about the depth of integration with your existing helpdesk, CRM, and order management tools.

How do you measure customer confirmation of resolution? Auto-closing a ticket after the AI responds is not the same as confirming the customer's issue was handled. Ask whether the platform uses customer confirmation, follow-up surveys, or re-contact tracking to verify resolution. The methodology matters as much as the number.

Are you willing to price based on successful resolutions? Outcome-based pricing aligns vendor incentives with customer outcomes. If the vendor only wins when your customers' problems actually get solved, they're building for resolution. If they charge per seat, per ticket, or per message regardless of outcome, their incentive is volume, not results.

The metric shift is already happening

The industry is moving in this direction, even if slowly. Gartner predicts that by 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention. Not deflect. Resolve. The platforms built around deflection metrics will struggle to meet that standard because their architecture, their measurement infrastructure, and their pricing models weren't designed for it.

For CX leaders evaluating AI vendors this quarter, the deflection-vs-resolution distinction isn't a philosophical debate. It's a procurement filter. The vendors who measure resolution will build products that improve resolution. The vendors who measure deflection will build products that improve deflection. And your customers will know the difference long before your dashboard does.

If you're running an evaluation right now, start with the five questions above. They'll tell you more in fifteen minutes than a polished demo ever will.
