Relevance Is Not Permission: The AI Access Problem No One Talks About

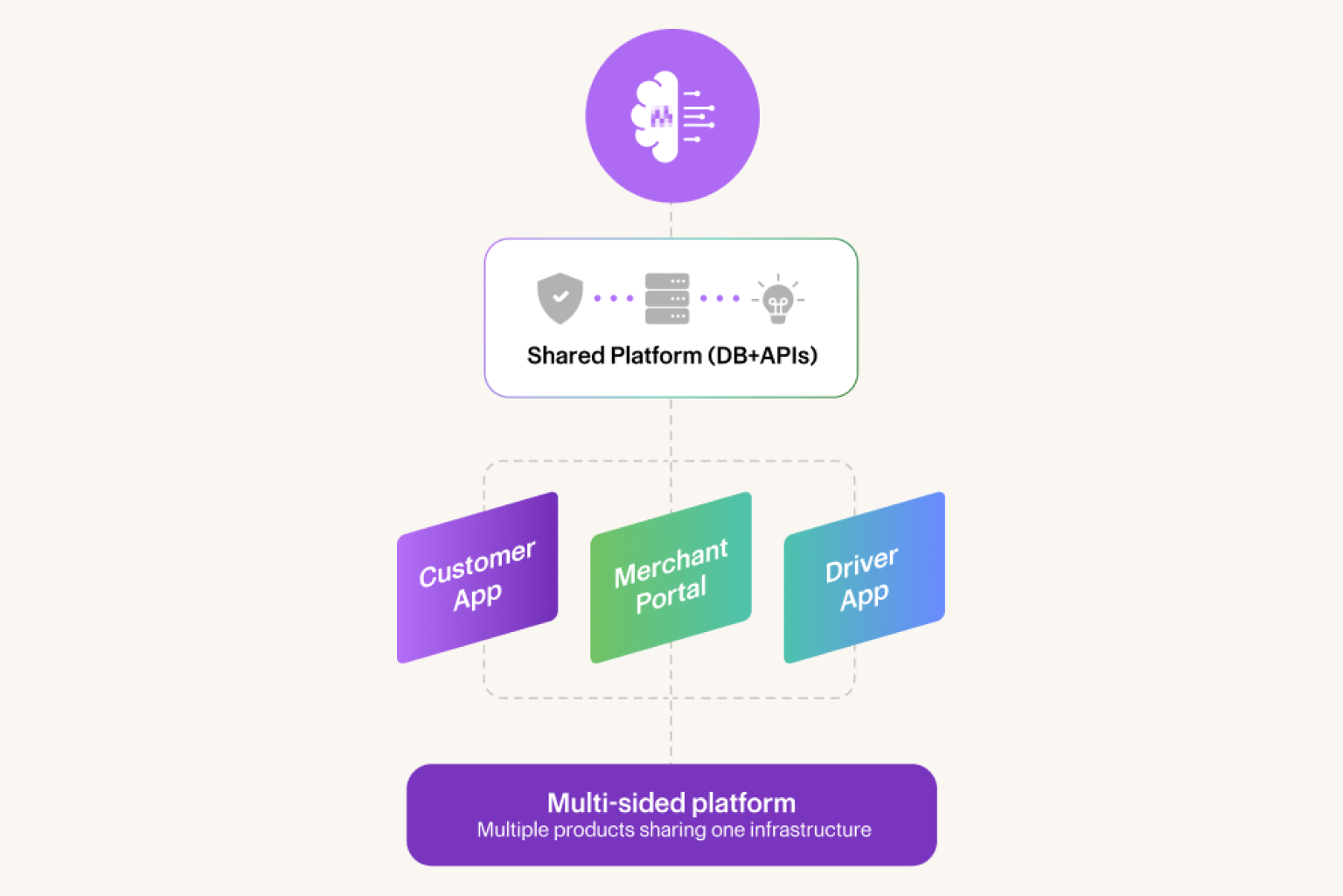

Most AI agents assume relevance equals permission. On multi-sided platforms, that assumption breaks trust.

There's an assumption baked into most AI agent design that goes largely unexamined: if data is relevant to answering a question, the agent should be able to use it.

It sounds reasonable, but it's wrong.

For organizations running platforms with multiple participant groups — customers and merchants, riders and drivers, clients and service providers — that assumption is what quietly turns AI deployments into trust problems.

The Relevance Trap

A customer contacts support about a late delivery. They want to know what happened and ask for a refund.

The most relevant information includes the restaurant's average prep times, the specific prep window on that order, the driver's routing history, and any flags that appeared during fulfillment. All of it is directly useful and produces a better, more accurate answer.

It would also surface a restaurant's operational performance data to a customer who has no business seeing it. It would implicate a driver's behavior without due process. It would draw a direct line between one side's outcome and another side's data, without either party's knowledge or consent.

The agent didn't make a mistake — it did exactly what it was designed to do: find what's relevant and use it. The mistake was assuming that relevance and permission are the same thing.

Why This Distinction Is Harder Than It Looks

On a single-sided product, relevance and permission mostly align. The user asking the question is also the person whose data is in scope. The gap between what's useful and what's allowed is narrow enough that most agents navigate it without incident.

Multi-sided platforms break that assumption entirely.

Every participant group has its own data, workflows, expectations of privacy, and definitions of a fair outcome. When an AI agent operates across all of them from a shared knowledge base, it isn't navigating one data environment. It's navigating several simultaneously without hard boundaries between them.

Three failure modes emerge consistently:

Data leakage across surfaces. Information relevant on one surface belongs to a participant on another. A customer agent that can see merchant dispute history, a merchant portal that surfaces customer complaint details, or a driver interface that references platform-level enforcement data.

Actions that create unreviewed consequences elsewhere. A refund issued on the customer surface triggers a payout deduction on the merchant surface. An AI agent that can take the first action but can't see the second consequence will make reasonable-sounding decisions that create problems it never encounters. The feedback loop doesn't close.

Inconsistent treatment by participant group. The same underlying question — why was my payment reduced? — means something fundamentally different depending on whether a merchant or a driver is asking it. The eligible data, the appropriate response, and the allowable actions are all different. An agent without hard surface boundaries generates answers that are technically accurate and contextually wrong.

None of these are the AI agent's fault — they're architecture failures. The agent was never told where the lines were.

Relevance Is a Starting Point, Not an Authorization

Here's the reframe that matters: relevance should inform what the agent looks for, but eligibility should determine what it can actually access.

Two separate questions, answered in sequence.

Eligibility comes first. Before an agent retrieves anything, the platform needs to have already answered: which surface is this interaction coming from? Which participant group is authenticated? What data and actions are in scope for this surface, this role, this policy context? What's categorically off-limits, regardless of how relevant it might be?

These aren't questions for the model to reason through. They're constraints the platform enforces deterministically, before the agent starts. Relevance then operates within those constraints, not in place of them.

The practical effect is significant. An agent scoped to the customer surface can't accidentally surface merchant data. Not because it's been instructed not to, but because that data was never eligible in the first place. Prompt instructions can be reasoned around. Eligibility constraints can't.

That distinction matters more than it might appear. Shouldn't use and can't access are not the same level of protection. At scale, in complex environments, with real edge cases, only the second one holds.

What This Means in Practice

Platforms that get this right don't build more restrictive agents — they build better-architected ones. The agent's reasoning capability is unchanged; what changes is the surface it reasons on top of.

The result isn't a less capable agent. It's an agent that can be trusted across every participant group simultaneously. On multi-sided platforms, that's the capability that scales.

Relevance gets you to a good answer. Eligibility gets you to a trustworthy one.

Don’t be Shy.

Make the first move.

Request a free

personalized demo.