How to Evaluate AI Agents for Enterprise Customer Service

A vendor-neutral framework for CX leaders who are tired of polished demos and want production evidence.

The enterprise AI agent market has matured to the point where there's no shortage of vendors claiming strong results. Proof-of-concept environments regularly produce the headline numbers — 80%, 90% automation rates — and yet deployments that maintain those results in production are considerably rarer. The gap is predictable if you know what to look for, and it's largely invisible if you're running a standard evaluation.

This guide is built around the questions that distinguish platforms built for production from platforms built to demo well.

Start by understanding what you're actually evaluating

Most vendor evaluations start with a demo request and end with a reference call. That process was designed for software that does what it's configured to do. AI agents are different: their performance in a controlled demonstration often has limited predictive value for production performance. The variables that matter most in production — query distribution, edge case frequency, integration reliability, behavior under load — are exactly the variables that demos are set up to avoid.

Before you run any evaluation, it's worth getting clear on three things.

What does resolution mean for your use case? Not deflection, not containment — resolution. The customer's problem was solved, they didn't come back about the same issue, and the AI took the action without needing a human to complete it. If you can't define this specifically for your product before the evaluation starts, any benchmark the vendor provides will be hard to interpret.

What does failure look like? An AI agent that handles 80% of interactions well but mishandles the other 20% in ways that damage trust is not a deployment win. Knowing what your failure modes are — wrong refunds, incorrect account changes, escalations that lose context — lets you build those into the evaluation rather than discovering them post-launch.

What's your integration reality? The performance gap between sandbox and production almost always traces back to integration. An agent evaluated on a sample of your knowledge base will behave differently than one connected to your live CRM, ticketing system, and billing platform. The earlier you can test with real system access, the more predictive the results.

The questions that surface production capability

Standard vendor evaluations tend to cluster around features and pricing. These questions are designed to surface something more specific: whether the platform is architected for how enterprise support actually works.

How do you define autonomous resolution, and how do you measure it? The answer tells you more than any benchmark. Vendors with genuine production experience have specific definitions and specific measurement methodologies. They distinguish between tickets closed by the AI, tickets deflected to self-service, and tickets that reached a human. Vendors optimizing for demo performance tend to conflate these. Ask them to walk through how a resolved ticket gets classified versus a deflected one in their system.
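One way to make that walkthrough concrete is to write the classification rule down before the vendor conversation. The sketch below is illustrative only: the `Ticket` fields, the `Outcome` names, and the precedence rule (any human involvement outranks an AI-completed action) are assumptions, not any vendor's actual schema.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    RESOLVED_BY_AI = "resolved_by_ai"
    DEFLECTED = "deflected_to_self_service"
    REACHED_HUMAN = "reached_human"


@dataclass
class Ticket:
    # Hypothetical fields; map these to whatever your helpdesk actually records.
    ticket_id: str
    human_touched: bool        # any human agent worked the ticket
    ai_completed_action: bool  # the AI executed the fix itself


def classify(ticket: Ticket) -> Outcome:
    """Assign exactly one outcome per ticket (assumed precedence rule)."""
    if ticket.human_touched:
        return Outcome.REACHED_HUMAN
    if ticket.ai_completed_action:
        return Outcome.RESOLVED_BY_AI
    # Assumption: anything closed without a human or an AI action was deflected.
    return Outcome.DEFLECTED


def outcome_shares(tickets):
    """Return the fraction of tickets in each outcome category."""
    counts = Counter(classify(t) for t in tickets)
    total = len(tickets)
    return {o: (counts[o] / total if total else 0.0) for o in Outcome}


sample = [
    Ticket("T-1", human_touched=False, ai_completed_action=True),
    Ticket("T-2", human_touched=False, ai_completed_action=False),
    Ticket("T-3", human_touched=True, ai_completed_action=True),
    Ticket("T-4", human_touched=False, ai_completed_action=True),
]
shares = outcome_shares(sample)
for outcome, share in shares.items():
    print(f"{outcome.value}: {share:.0%}")
```

If a vendor's headline number lumps the first two categories together, a breakdown like this makes the difference visible.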

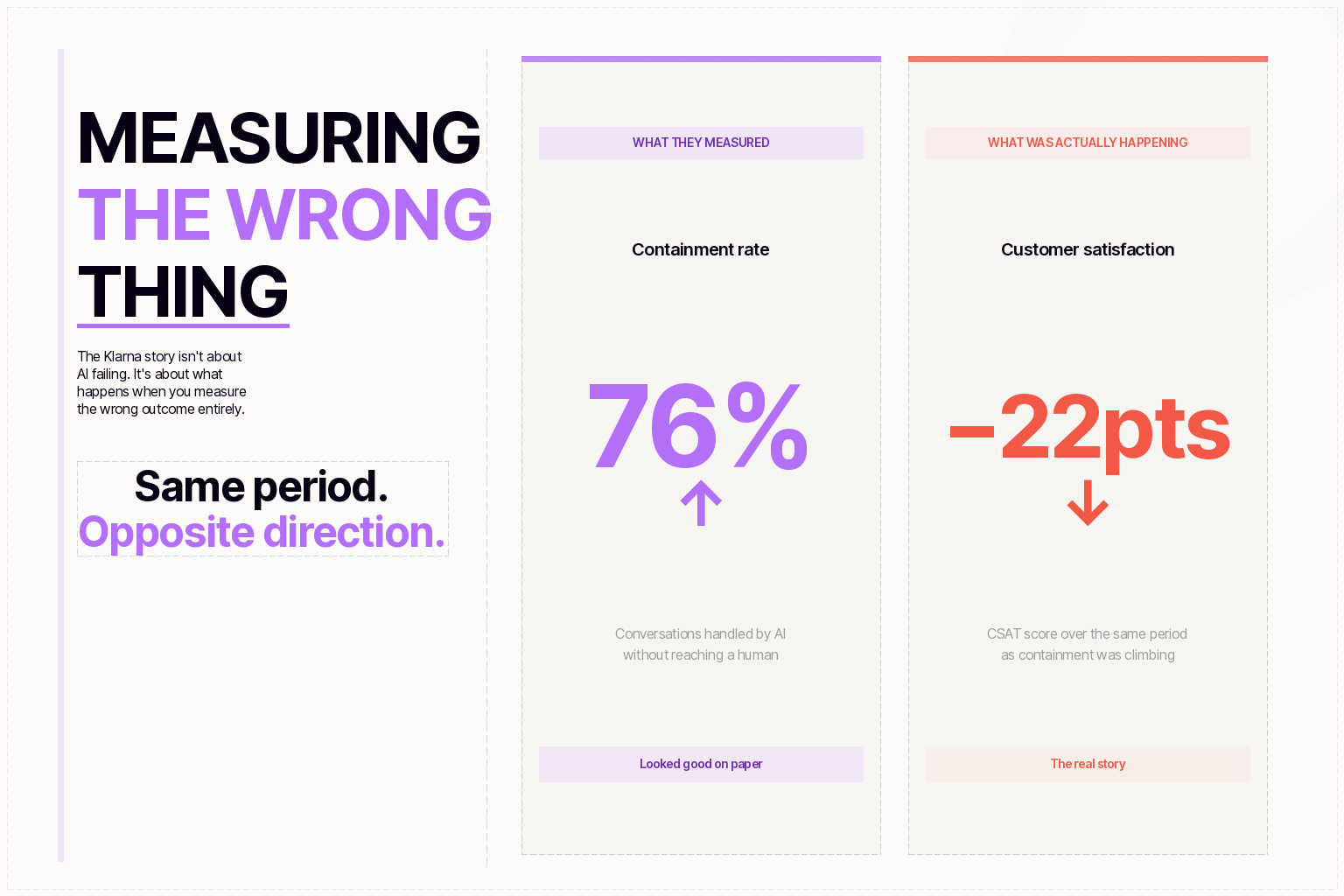

What's your re-contact rate within 72 hours? This is the metric that exposes false resolution. If a significant percentage of interactions that were marked resolved result in the same customer contacting support again within three days, the effective resolution rate is lower than the headline number. Production-focused vendors track this. Vendors who don't are measuring the wrong thing.
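As a rough sketch of how this could be computed from your own ticket export: the tuple layout, the `issue_key` used to match "the same issue", and the simple discount formula are all assumptions made for illustration, and a vendor may define the metric differently.

```python
from datetime import datetime, timedelta

RECONTACT_WINDOW = timedelta(hours=72)


def recontact_rate(resolved, contacts, window=RECONTACT_WINDOW):
    """Share of resolved interactions followed by a repeat contact.

    resolved: list of (customer_id, issue_key, resolved_at)
    contacts: list of (customer_id, issue_key, contacted_at)
    A repeat counts only if it is the same customer, the same issue,
    and it arrives within `window` after the resolution timestamp.
    """
    if not resolved:
        return 0.0
    recontacted = 0
    for customer_id, issue_key, resolved_at in resolved:
        if any(
            c_id == customer_id
            and c_issue == issue_key
            and resolved_at < c_at <= resolved_at + window
            for c_id, c_issue, c_at in contacts
        ):
            recontacted += 1
    return recontacted / len(resolved)


def effective_resolution_rate(headline_rate, recontact):
    """Assumed adjustment: discount the headline rate by the re-contact share."""
    return headline_rate * (1 - recontact)


t0 = datetime(2024, 1, 1, 9, 0)
resolved = [
    ("c1", "refund", t0),
    ("c2", "address", t0),
    ("c3", "refund", t0),
    ("c4", "login", t0),
]
contacts = [
    ("c1", "refund", t0 + timedelta(hours=20)),    # same issue, inside window
    ("c2", "address", t0 + timedelta(hours=100)),  # same issue, outside window
    ("c3", "billing", t0 + timedelta(hours=5)),    # different issue
]
rate = recontact_rate(resolved, contacts)
print(f"re-contact rate: {rate:.0%}")
print(f"effective resolution: {effective_resolution_rate(0.80, rate):.0%}")
```

Under these assumptions, an 80% headline rate with a 25% re-contact rate works out to an effective rate of about 60%.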

Can the agent take actions in my backend systems, or can it only surface information? Read-only versus read-write integration is the architectural dividing line. An agent that can tell a customer their order is delayed but can't reschedule the shipment isn't resolving the issue; it's answering a question. For most enterprise support use cases, genuine resolution requires write access to at least two or three backend systems. Ask which of your systems are covered by prebuilt connectors and which would require custom development.

How does escalation work, and what happens to context? The escalation moment is where most AI deployments create friction rather than remove it. When the agent hands off to a human, does the human receive a summary of the conversation and the customer's account history? Or does the customer start over? Test this specifically during evaluation. It's the interaction most likely to generate customer complaints, and it's the one most often glossed over in demos.
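A handoff test can be as simple as checking what the human agent actually receives. The sketch below assumes the escalation arrives as a dictionary; the field names `conversation_summary` and `account_history` are hypothetical stand-ins for whatever the platform under evaluation exposes.

```python
# Hypothetical field names; substitute the ones the platform actually sends.
REQUIRED_HANDOFF_FIELDS = ("conversation_summary", "account_history")


def missing_handoff_context(payload: dict) -> list[str]:
    """Return the required context fields that are absent or empty."""
    return [field for field in REQUIRED_HANDOFF_FIELDS if not payload.get(field)]


good_handoff = {
    "conversation_summary": "Customer asked to reschedule a delayed shipment.",
    "account_history": ["order placed", "delivery delayed"],
}
bad_handoff = {"conversation_summary": "", "ticket_id": "T-9"}

print(missing_handoff_context(good_handoff))
print(missing_handoff_context(bad_handoff))
```

Running a check like this over a batch of test escalations gives you a count of handoffs where the customer would have had to start over.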

What does the agent do when it doesn't know? Graceful failure is as important as strong performance. Watch how the system handles queries outside its training, ambiguous requests, and intentional attempts to produce bad output. Vendors confident in their handling of these cases will let you probe them during evaluation. The ones who steer away from these tests usually have a reason.

How long does integration take? If the honest answer is months, that's useful information. Enterprise deployments that require extended professional services engagements before going live carry significant cost and risk. Overlay architectures that connect to existing helpdesks without requiring data migration can often go live in weeks. Ask for specific timelines from signed contract to first live interactions.

The proof-of-concept trap

POCs are nearly universal in enterprise AI evaluations, and they almost always produce better results than production deployments. This isn't a coincidence. POCs run on clean data, limited query scope, and vendor-side support. They're tuned for the evaluation. Production deployments run on the full distribution of customer queries, connected to systems that were never designed to work with AI, and managed by teams who have other jobs.

To close this gap, push for POC conditions that actually resemble production. Run it on live queries from your actual customer base, not a sample. Connect it to production-equivalent systems, not a sandbox. Run it for long enough to see performance stabilize, not just the initial tuned window. Ask what percentage of queries during the POC were within the pre-configured scope versus outside it.
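The in-scope versus out-of-scope question can be answered from the POC logs directly. This is a minimal sketch assuming each logged query carries two boolean flags, `in_scope` and `resolved`; both names are hypothetical, and how a query gets labelled in-scope is something to agree with the vendor beforehand.

```python
def scope_breakdown(queries):
    """Summarise volume share and resolution rate for each scope bucket.

    queries: iterable of dicts with boolean 'in_scope' and 'resolved' keys.
    """
    totals = {True: 0, False: 0}
    resolved = {True: 0, False: 0}
    for q in queries:
        totals[q["in_scope"]] += 1
        if q["resolved"]:
            resolved[q["in_scope"]] += 1

    n = totals[True] + totals[False]
    report = {}
    for in_scope, label in ((True, "in_scope"), (False, "out_of_scope")):
        count = totals[in_scope]
        report[label] = {
            "share_of_queries": count / n if n else 0.0,
            "resolution_rate": resolved[in_scope] / count if count else 0.0,
        }
    return report


# Illustrative data only: 8 in-scope queries (7 resolved), 2 out-of-scope (0 resolved).
poc_log = (
    [{"in_scope": True, "resolved": True}] * 7
    + [{"in_scope": True, "resolved": False}]
    + [{"in_scope": False, "resolved": False}] * 2
)
report = scope_breakdown(poc_log)
for label, stats in report.items():
    print(label, stats)
```

A POC whose out-of-scope share is far below what your live traffic shows is a sign the headline number will not carry over to production.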

The vendors who are willing to run a POC under those conditions are the ones building for production. The ones who insist on controlling the conditions closely are optimizing for the POC itself.

Compliance isn't an add-on

For most enterprise deployments, the path from POC to production runs through your security review process. This is where many AI agent evaluations stall or fail entirely. The compliance requirements for an AI agent that autonomously handles customer data, processes transactions, and takes actions in production systems are substantial.

Evaluate compliance posture early, not as a final checkpoint. Specifically, check whether the vendor holds a current SOC 2 Type II report, will sign a HIPAA BAA, provides GDPR data processing agreements, and maintains PCI DSS compliance for any payment-adjacent workflows. Check whether audit trails are available and queryable for every agent action, whether the vendor's subprocessors have been evaluated and disclosed, and whether the platform can operate within your data residency requirements.

Vendors with genuine enterprise compliance infrastructure will answer these questions with documentation, not assurances. The ones who treat compliance as a late-stage checkbox are the ones most likely to fail security review after you've already invested in the evaluation.

The reference call you should be asking for

Most vendor reference calls are structured to confirm positive experiences. Ask instead for a reference call with a customer who had a difficult deployment — one that required significant troubleshooting before it performed well. How the vendor handled that deployment tells you more than three smooth ones.

Ask the reference specifically what went wrong, how long it took to resolve, and whether they got the support they needed. The answers tell you whether you'll still want to be working with this vendor eighteen months into a production deployment.
