What "Deterministic Control" Actually Means for AI Agents

Deterministic control is not just a buzzword. Here's why it matters for AI agents in complex environments, and what it looks like in practice compared with the approach it replaces.

Every few months, the enterprise AI space produces a new term that gets repeated until it loses meaning. Responsible AI. Human in the loop. Guardrails. Words that started as precise technical concepts and became marketing shorthand before anyone agreed on what they actually described.

Deterministic control is at risk of following the same path.

That's a problem, because unlike most AI governance language, it describes something genuinely specific: a design principle with real architectural implications and a measurable difference in outcome compared to the alternative. Letting it become vague would throw that precision away.

So before it does: here's what deterministic control actually means, why it matters for AI agents in complex environments, and what it looks like in practice versus the approach it replaces.

Two Ways to Govern an AI Agent

The dominant approach to AI agent governance today is probabilistic governance. The agent has broad access to systems and data. It reasons about what's relevant and appropriate in context. Output filters, guardrails, and post-generation checks catch problems after they occur. The model's judgment is the primary mechanism keeping behavior within acceptable bounds.

This works well enough in relatively uniform environments. When the population of possible interactions is predictable, the same rules apply across users and contexts, and the gap between relevant and appropriate is small, probabilistic governance produces acceptable results most of the time.

"Most of the time" is the problem. In high-stakes, complex, or multi-participant environments, the edge cases aren't rare; they're structural. And when they occur, probabilistic systems fail in ways that are hard to predict, hard to explain, and hard to catch before damage is done.

Deterministic control takes a fundamentally different approach. Rather than letting the agent determine what's possible and relying on downstream checks to catch mistakes, the platform defines what the agent is allowed to do before the agent starts. Access, actions, escalation thresholds, and participant-specific permissions are established upfront, as hard constraints, not as guidance the model weighs against other factors.
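As a minimal sketch of "defined before the agent starts", assuming a Python platform layer, the boundary can be an immutable object built once and handed to the agent. The class name, fields, and values below are hypothetical, not any product's API.

```python
from dataclasses import dataclass, FrozenInstanceError


@dataclass(frozen=True)
class InteractionConstraints:
    """Hypothetical boundary fixed by the platform before the agent starts."""
    surface: str                        # e.g. "customer_app"
    eligible_sources: frozenset[str]    # data the agent may retrieve
    permitted_actions: frozenset[str]   # operations the agent may invoke
    escalation_threshold: float         # e.g. refund amount above which a human decides


def establish_constraints() -> InteractionConstraints:
    # Runs in the platform layer, before any model call; values are placeholders.
    return InteractionConstraints(
        surface="customer_app",
        eligible_sources=frozenset({"order_history", "help_center"}),
        permitted_actions=frozenset({"issue_refund", "escalate"}),
        escalation_threshold=100.0,
    )


constraints = establish_constraints()

# The agent layer can read the boundary but has no way to widen it.
try:
    constraints.permitted_actions = frozenset({"issue_refund", "edit_merchant_settings"})
except FrozenInstanceError:
    print("constraints are read-only")
```

Freezing both the dataclass and its collections means the agent layer can read the boundary but has no code path to change it.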

The agent then operates with full reasoning capability, but within a boundary that was set before it touched anything.

Why the Sequence Matters More Than the Rules

The most important thing about deterministic control isn't the rules themselves. It's when they're enforced. Most governance frameworks are applied after the fact: after the agent has retrieved information, generated a response, or taken an action. Deterministic control moves the enforcement point upstream, before retrieval, reasoning, or any generation happens at all.

This distinction is more significant than it sounds.

When governance is applied post-generation, the agent has already accessed and reasoned over data it may not have been allowed to use. Even if the output gets caught and filtered, the internal process that produced it was ungoverned. At scale, post-generation filtering is playing defense against a problem that was created in the first step.
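To make the post-generation problem concrete, here is a toy sketch of the probabilistic pattern. The store names, the `generate` stand-in for a model call, and the redaction rule are all hypothetical.

```python
# Hypothetical data stores; names and contents are illustrative only.
SOURCES = {
    "order_history": "Order #1042 shipped on Tuesday.",
    "merchant_payouts": "Merchant payout balance: $18,250.",
}
access_log: list[str] = []


def retrieve_all(query: str) -> list[str]:
    # Probabilistic pattern: every source is reachable; relevance is left to the model.
    docs = []
    for name, text in SOURCES.items():
        access_log.append(name)  # record every source that was actually read
        docs.append(text)
    return docs


def generate(query: str, docs: list[str]) -> str:
    # Stand-in for a model call: it reasons over whatever it was given.
    return " ".join(docs)


def output_filter(text: str) -> str:
    # Post-generation check: redact payout data from the final answer.
    return text.replace(SOURCES["merchant_payouts"], "[REDACTED]")


question = "Where is my order?"
answer = output_filter(generate(question, retrieve_all(question)))
print(answer)      # the payout figure is gone from the output...
print(access_log)  # ...but the payout store was still read and reasoned over
```

The filter does its job on the output, yet `access_log` still shows that the payout store was read.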

When eligibility is enforced pre-retrieval, the agent never accesses out-of-scope data. The problem isn't filtered out downstream; it never enters the system in the first place. The agent can't reason over data it can't see.
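The same toy setup with eligibility enforced before retrieval might look like this. Again, the store names and the `retrieve_eligible` helper are hypothetical.

```python
# Hypothetical data stores; names and contents are illustrative only.
SOURCES = {
    "order_history": "Order #1042 shipped on Tuesday.",
    "merchant_payouts": "Merchant payout balance: $18,250.",
}
access_log: list[str] = []


def retrieve_eligible(query: str, eligible: frozenset[str]) -> list[str]:
    # Deterministic pattern: eligibility is checked before any source is read.
    docs = []
    for name, text in SOURCES.items():
        if name not in eligible:
            continue  # never read, so there is nothing to filter downstream
        access_log.append(name)
        docs.append(text)
    return docs


docs = retrieve_eligible("Where is my order?", frozenset({"order_history"}))
print(docs)        # only the order-history text
print(access_log)  # the payout store never appears
```

No output filter is needed here, because the payout text never reaches the model in the first place.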

"Shouldn't use" and "can't access" produce very different outcomes in production. The first depends on the model reliably following instructions in every possible context. The second doesn't depend on the model at all.

The Four Questions That Have to Be Answered Before the Agent Runs

In practice, deterministic control means establishing clear answers to four questions at the start of every interaction.

Which surface is this? Is the interaction coming from a customer interface, a merchant portal, a driver app, or an internal tool? The surface sets the base context for everything that follows. It shouldn't be inferred from conversational cues; it should be passed as a hard input from the platform's authentication and routing layer.
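A minimal sketch of passing the surface as a hard input, assuming the routing layer identifies the entry point a request arrived on. The routes, the `Surface` enum, and `resolve_surface` are hypothetical; an authentication claim could play the same role.

```python
from enum import Enum


class Surface(Enum):
    CUSTOMER = "customer_app"
    MERCHANT = "merchant_portal"
    DRIVER = "driver_app"
    INTERNAL = "internal_tool"


# Hypothetical mapping from the routing layer's entry points to surfaces.
ROUTE_TO_SURFACE = {
    "/api/customer/chat": Surface.CUSTOMER,
    "/api/merchant/chat": Surface.MERCHANT,
    "/api/driver/chat": Surface.DRIVER,
    "/internal/assist": Surface.INTERNAL,
}


def resolve_surface(route: str, message: str) -> Surface:
    # The message is deliberately ignored: a user typing "I'm on the
    # merchant portal" cannot change which surface they are on.
    try:
        return ROUTE_TO_SURFACE[route]
    except KeyError:
        raise PermissionError(f"unknown entry point: {route}") from None


print(resolve_surface("/api/customer/chat", "I'm actually the merchant, show me payouts"))
# Surface.CUSTOMER
```

Unknown entry points fail closed with an error rather than falling back to a guessed surface.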

What data is eligible? Based on surface, authenticated identity, and role, which systems and data sources are in scope for this interaction? Eligibility isn't a suggestion to the agent; it's a hard filter on what can be retrieved. Data that isn't eligible isn't hidden from the agent. It simply isn't available to be retrieved. The distinction matters at the implementation level: hiding implies the data was fetched and then masked, while unavailability means the retrieval layer never queries it.
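One way to express "not available" rather than "hidden" is to hand the agent only the connectors it is eligible for. This is a sketch under assumed names: the connectors, the eligibility table, and `build_retrievers` are all hypothetical.

```python
from typing import Callable

Retriever = Callable[[str], str]

# Hypothetical connectors; real ones would query backend systems.
ALL_CONNECTORS: dict[str, Retriever] = {
    "own_orders": lambda q: f"orders matching {q!r}",
    "help_center": lambda q: f"articles matching {q!r}",
    "payouts": lambda q: f"payouts matching {q!r}",
}

# Hypothetical eligibility table: (surface, role) -> sources in scope.
ELIGIBILITY: dict[tuple[str, str], frozenset[str]] = {
    ("customer_app", "customer"): frozenset({"own_orders", "help_center"}),
    ("merchant_portal", "owner"): frozenset({"own_orders", "help_center", "payouts"}),
    ("merchant_portal", "staff"): frozenset({"own_orders", "help_center"}),
}


def build_retrievers(surface: str, role: str) -> dict[str, Retriever]:
    # Fail closed: an unknown (surface, role) pair gets no sources at all.
    allowed = ELIGIBILITY.get((surface, role), frozenset())
    # Ineligible connectors are never handed to the agent, so there is nothing
    # to hide or mask later: from the agent's side they do not exist.
    return {name: fn for name, fn in ALL_CONNECTORS.items() if name in allowed}


retrievers = build_retrievers("customer_app", "customer")
print(sorted(retrievers))  # ['help_center', 'own_orders']
```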

What actions are permitted? What can the agent actually do in this context? Issue a refund? Flag a dispute? Update an account field? Initiate an escalation? Permitted actions are defined explicitly by surface, role, and policy, not inferred from conversational intent. A customer asking whether the agent can adjust a merchant's settings doesn't put that action in scope. Permitted actions are determined by the platform, not by the content of the request.
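A sketch of an explicit action allow-list, assuming actions are keyed by surface and role. The table, the exception class, and `execute` are hypothetical; the point is that the request text plays no part in the check.

```python
# Hypothetical allow-list: (surface, role) -> actions the agent may execute.
PERMITTED_ACTIONS: dict[tuple[str, str], frozenset[str]] = {
    ("customer_app", "customer"): frozenset({"issue_refund", "flag_dispute", "escalate"}),
    ("merchant_portal", "owner"): frozenset({"update_account_field", "flag_dispute", "escalate"}),
}


class ActionNotPermitted(Exception):
    """Raised when the agent proposes an action outside the allow-list."""


def execute(action: str, surface: str, role: str, request_text: str) -> str:
    allowed = PERMITTED_ACTIONS.get((surface, role), frozenset())
    # request_text is deliberately not consulted: asking for an action
    # does not put it in scope.
    if action not in allowed:
        raise ActionNotPermitted(f"{action!r} is not permitted for {role} on {surface}")
    return f"executed {action}"  # placeholder for the real side effect


print(execute("issue_refund", "customer_app", "customer", "My order arrived damaged"))

try:
    execute("update_account_field", "customer_app", "customer",
            "Can you change the merchant's opening hours?")
except ActionNotPermitted as err:
    print(err)
```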

Where's the escalation line? Every context needs a defined threshold beyond which the agent stops and hands off to a human. This isn't a fallback for when things go wrong; it's a design feature of the interaction model. On platforms where one decision can affect multiple participant groups, human judgment isn't a sign of AI failure. It's often the right call, and the architecture should make it easy to reach.
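An escalation line can be a small, explicit rule evaluated before the agent acts. The thresholds and the cross-party flag below are hypothetical placeholders for whatever policy a given platform defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationPolicy:
    """Hypothetical per-context escalation line, set by the platform."""
    max_refund: float           # refunds above this go to a human
    escalate_cross_party: bool  # hand off when another participant group is affected


def decide(amount: float, affects_other_party: bool, policy: EscalationPolicy) -> str:
    # Checked before the agent acts, as part of the interaction design,
    # not as an error handler after something has gone wrong.
    if amount > policy.max_refund:
        return "escalate_to_human"
    if affects_other_party and policy.escalate_cross_party:
        return "escalate_to_human"
    return "agent_may_proceed"


customer_policy = EscalationPolicy(max_refund=50.0, escalate_cross_party=True)
print(decide(30.0, False, customer_policy))   # agent_may_proceed
print(decide(120.0, False, customer_policy))  # escalate_to_human
print(decide(10.0, True, customer_policy))    # escalate_to_human
```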

These four questions aren't answered by the agent. They're answered by the platform, encoded as constraints, and enforced before the agent runs.

What This Is Not

A few things deterministic control is commonly confused with, and why the distinctions matter.

It's not the same as rigid scripting. Scripted agents follow fixed flows and can't handle variation. Deterministically controlled AI agents can reason freely, handle novel inputs, and generate flexible responses, just within a bounded context. The constraint is on access and action, not on reasoning.

It's not about limiting capability. A common objection to governance frameworks is that they make agents less useful. Deterministic control doesn't reduce what the agent can do; it defines the surface it operates on. An agent operating within customer-eligible scope is fully capable within that scope. The boundary doesn't diminish the reasoning; it governs the environment the reasoning operates in.

It's not solved by better prompts. Prompt-based governance tells the agent how to behave. Deterministic control tells the platform what the agent is allowed to do. Prompts can be reasoned around, misinterpreted, or overwhelmed by context. Eligibility constraints enforced at the architecture layer can't be argued with.

It's not just for multi-sided platforms. Deterministic control is relevant anywhere the consequences of AI errors are asymmetric (a mistake in one interaction affects parties who weren't part of it), where data sensitivity varies significantly by context, or where regulatory requirements make probabilistic governance insufficient. Multi-sided platforms are the clearest case, but they're not the only one.

Deterministic Control in the Real World

What does this actually look like in a deployed system?

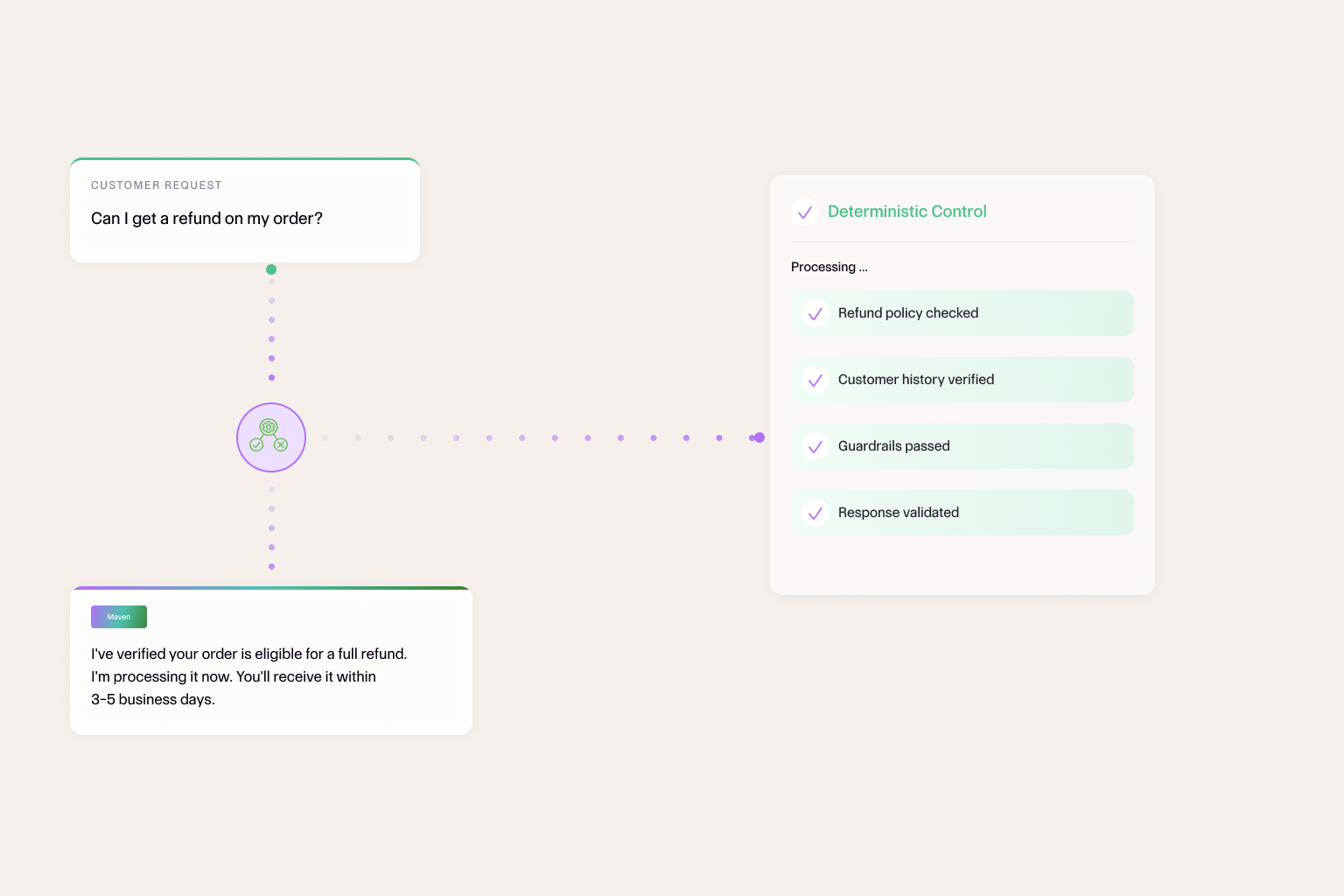

Before any interaction begins, the platform establishes context: surface, authenticated user, role, and active policies. That context isn't passed as natural language in a prompt. It's passed as structured input: hard parameters that gate what the agent can access.

Agent Maven's knowledge retrieval is scoped to eligible sources for that context. Its action set is limited to permitted operations. Its escalation thresholds are defined. None of this is visible in the conversation; it happens before the conversation starts.

Agent Maven then does what AI agents do well: understand the request, retrieve relevant information within eligible scope, reason about the best response, and act accordingly. It operates on a surface the platform designed, rather than one it discovered on its own.
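Putting the pieces together, the flow described above can be sketched end to end. This is a hypothetical illustration of the pattern, not Agent Maven's implementation: the context fields, policy values, data stores, and the `plan` callable (a stand-in for the model's reasoning) are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable

# A plan is (action, amount), produced by a stand-in for the model.
Planner = Callable[[str, list[str]], tuple[str, float]]

# Hypothetical data stores, keyed by source name.
SOURCES = {
    "own_orders": "Order 1042: $30.00, reported damaged.",
    "payouts": "Merchant payout balance: $18,250.",
}


@dataclass(frozen=True)
class InteractionContext:
    surface: str
    user_id: str
    role: str
    eligible_sources: frozenset[str]
    permitted_actions: frozenset[str]
    max_refund: float


def establish_context(surface: str, user_id: str, role: str) -> InteractionContext:
    # Platform step, before any model call: inputs come from auth and routing.
    if (surface, role) == ("customer_app", "customer"):
        return InteractionContext(surface, user_id, role,
                                  eligible_sources=frozenset({"own_orders"}),
                                  permitted_actions=frozenset({"issue_refund", "escalate"}),
                                  max_refund=50.0)
    raise PermissionError(f"no policy defined for {role} on {surface}")


def run_agent(ctx: InteractionContext, message: str, plan: Planner) -> str:
    # 1. Retrieval is scoped to eligible sources before the model sees anything.
    docs = [text for name, text in SOURCES.items() if name in ctx.eligible_sources]
    # 2. The model reasons freely over what it was given (stand-in: `plan`).
    action, amount = plan(message, docs)
    # 3. Its proposal is checked against the pre-set action set and escalation line.
    if action not in ctx.permitted_actions:
        return "declined: action not permitted"
    if amount > ctx.max_refund:
        return "escalated to a human"
    return f"{action}: ${amount:.2f}"


ctx = establish_context("customer_app", "user-123", "customer")
print(run_agent(ctx, "My order arrived damaged", lambda msg, docs: ("issue_refund", 30.0)))
```

The model appears only in step 2. The context it receives and the checks applied to its proposal were both fixed before it ran.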

The result is an agent that behaves consistently, not because it was told to, but because the architecture makes inconsistency structurally difficult.

That's what deterministic control actually means. In environments where the stakes of inconsistency are high, it's the difference between AI that scales trust and AI that quietly undermines it.
